CloudHub is MuleSoft’s integration platform as a service (iPaaS) that enables the deployment and management of integration solutions in the cloud. Runtime Manager, CloudHub’s management tool, provides an integrated set of logging tools that allow support and operations staff to monitor deployed applications and troubleshoot them using their application logs.

Currently, application log entries are kept for 30 days or until they reach a maximum size of 100 MB. We are often required to keep these logs for longer periods for auditing or archiving purposes. Overly chatty applications (applications that write log entries frequently) may find their logs covering only a few days, restricting the troubleshooting window even further. Runtime Manager allows portal users to manually download log files via the browser; however, no automated solution is provided out of the box.

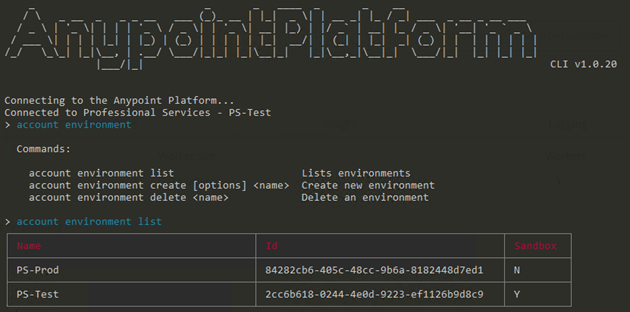
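To make the effect of the two limits concrete, here is a rough back-of-the-envelope sketch. It assumes an application writes logs at a steady daily rate and that whichever limit is reached first determines how much history remains; the daily volumes are hypothetical.

```python
RETENTION_DAYS = 30      # retention period stated above
MAX_LOG_SIZE_MB = 100    # size cap stated above


def days_of_logs_retained(daily_log_volume_mb: float) -> float:
    """Estimate how many days of history remain for a steady daily log volume."""
    if daily_log_volume_mb <= 0:
        # An application that writes nothing is limited only by the time window.
        return float(RETENTION_DAYS)
    # Whichever limit is hit first determines the usable window.
    return min(float(RETENTION_DAYS), MAX_LOG_SIZE_MB / daily_log_volume_mb)


if __name__ == "__main__":
    for volume in (1, 10, 40):  # hypothetical daily volumes in MB
        days = days_of_logs_retained(volume)
        print(f"{volume} MB/day -> about {days:.1f} days of logs")
```

Under these assumptions, an application writing 40 MB of logs per day would keep only about two and a half days of history.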

The good news is that the platform provides both a command line tool and a management API that we can leverage. Leaving the CLI to one side for now, the platform’s management API looks promising. A search in Anypoint Exchange also yields a ready-built CloudHub Connector. However, upon further investigation, the connector doesn’t meet all our requirements. It does not appear to support different business groups and environments, so using it to download logs for applications deployed to non-default environments will not work (at least in the current version). The best approach is to consume the management APIs provided by the Anypoint Platform directly. RAML definitions are available for these APIs, which makes consuming them within a Mule flow very easy.

Solution overview

In this post we’ll develop a CloudHub application that is triggered periodically to loop through a collection of target applications, connect to the Anypoint Management APIs and fetch the current application log for each deployed instance. The downloaded logs will be compressed and sent to an Amazon S3 bucket for archiving.

Putting the solution together:

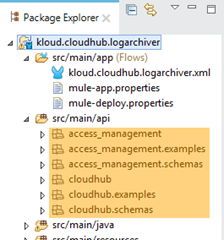

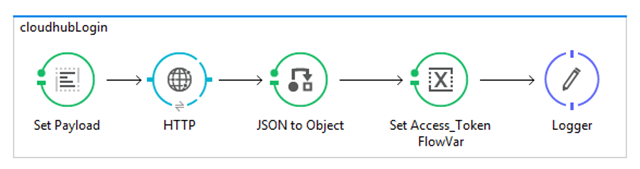

We start by grabbing the RAML for both the Anypoint Access Management API and the Anypoint Runtime Manager API and bringing them into the project. The Access Management API provides the authentication and authorisation operations used to log in and obtain the access token needed in subsequent calls to the Runtime Manager API. The Runtime Manager API provides the operations to enumerate the deployed instances of an application and download the application log.

Download and add the RAML definitions to the project by extracting them into the ~/src/main/api folder.

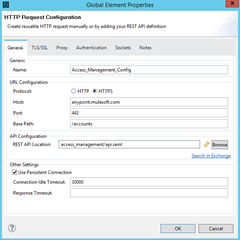

To consume these APIs we’ll use the HTTP connector, so we need to define some global configuration elements that make use of the RAML definitions we just imported.

Note: Referencing these definitions directly from Exchange currently throws some RAML parsing errors.

To avoid this, we download the definitions manually and reference our local copy. We’ll need to update that copy whenever the API definitions change in the future.

To support multi-value configuration, I have used a simple JSON structure to describe the collection of applications we need to iterate over.
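As an illustration of the idea (not the exact structure used in this solution), the sketch below parses a JSON array of target applications into a typed collection. The field names `name`, `organizationId` and `environmentId`, and the sample values, are hypothetical.

```python
import json
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class TargetApp:
    name: str             # CloudHub application name
    organization_id: str  # business group the application belongs to
    environment_id: str   # environment the application is deployed to


# Hypothetical configuration value; the field names are illustrative only.
CONFIG_JSON = """
[
  {"name": "orders-api", "organizationId": "org-1", "environmentId": "env-dev"},
  {"name": "billing-api", "organizationId": "org-1", "environmentId": "env-prod"}
]
"""


def parse_targets(raw: str) -> List[TargetApp]:
    """Parse the JSON configuration into a list we can iterate over."""
    entries = json.loads(raw)
    if not isinstance(entries, list):
        raise ValueError("expected a JSON array of applications")
    return [
        TargetApp(e["name"], e["organizationId"], e["environmentId"])
        for e in entries
    ]


if __name__ == "__main__":
    for target in parse_targets(CONFIG_JSON):
        print(target.name, target.environment_id)
```

Keeping the organisation and environment on each entry is what lets one deployment of the archiver cover applications in several environments.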

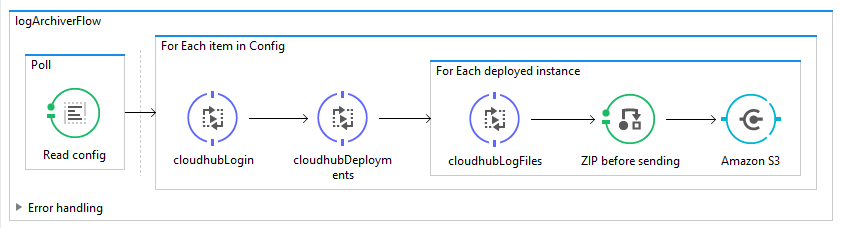

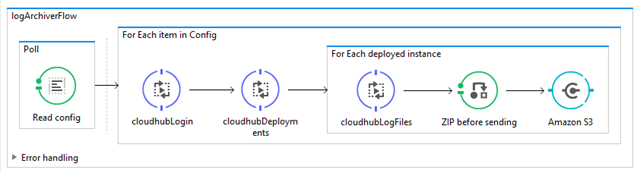

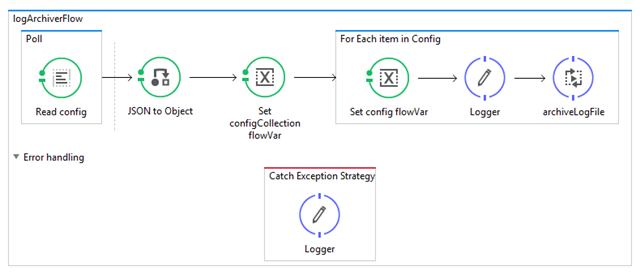

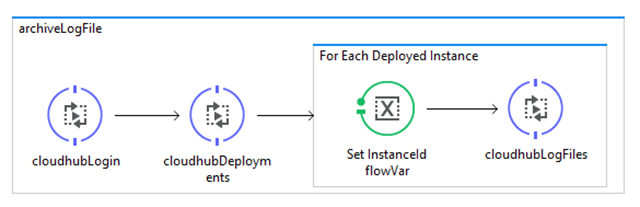

Next, we create our top-level flow, which is triggered periodically to read and parse our configuration setting into a collection that we can iterate over to download the application logs.

Now, we create a sub-flow that describes the process of downloading application logs for each deployed instance. We first obtain an access token using the Access Management API and present that token to the Runtime Manager API to gather details of all deployed instances of the application. We then iterate over that collection and call the Runtime Manager API to download the current application log for each deployed instance.

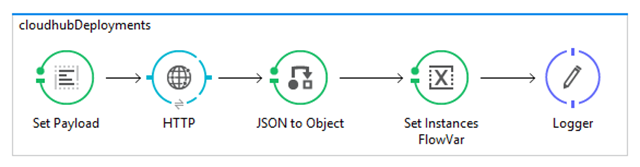
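Outside of Mule, the same sequence (log in, list the deployed instances, download each instance’s log) can be sketched as follows. This is only an outline under stated assumptions: the endpoint paths, the `X-ANYPNT-ORG-ID` and `X-ANYPNT-ENV-ID` headers and the response shapes reflect my understanding of the Anypoint APIs and should be checked against the RAML definitions, and the `post_json` and `get` callables are hypothetical stand-ins for a real HTTP client so the sketch runs without a network.

```python
from typing import Callable, Dict

BASE_URL = "https://anypoint.mulesoft.com"  # assumed platform base URL

# Hypothetical transport signatures:
#   post_json(url, body)  -> parsed JSON response (dict)
#   get(url, headers)     -> parsed JSON (dict) or raw bytes for the log file
PostJson = Callable[[str, dict], dict]
Get = Callable[[str, Dict[str, str]], object]


def login(post_json: PostJson, username: str, password: str) -> str:
    """Obtain an access token from the Access Management API (assumed path)."""
    reply = post_json(
        f"{BASE_URL}/accounts/login",
        {"username": username, "password": password},
    )
    return reply["access_token"]


def auth_headers(token: str, org_id: str, env_id: str) -> Dict[str, str]:
    """Headers that select the business group and environment (assumed names)."""
    return {
        "Authorization": f"Bearer {token}",
        "X-ANYPNT-ORG-ID": org_id,
        "X-ANYPNT-ENV-ID": env_id,
    }


def download_logs(get: Get, post_json: PostJson, username: str, password: str,
                  app: str, org_id: str, env_id: str) -> Dict[str, bytes]:
    """Return a mapping of instance id to the current log for one application."""
    token = login(post_json, username, password)
    headers = auth_headers(token, org_id, env_id)
    app_url = f"{BASE_URL}/cloudhub/api/v2/applications/{app}"  # assumed path

    # Assumed response shape: {"data": [{"instances": [{"instanceId": ...}]}]}
    deployments = get(f"{app_url}/deployments", headers)
    logs: Dict[str, bytes] = {}
    for deployment in deployments["data"]:
        for instance in deployment["instances"]:
            instance_id = instance["instanceId"]
            logs[instance_id] = get(
                f"{app_url}/instances/{instance_id}/log-file", headers
            )
    return logs
```

Passing the transport in as arguments keeps the orchestration logic separate from the HTTP details, much as the Mule sub-flows separate the loop from the individual API calls.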

Next, we add a sub-flow for each of the in-scope Anypoint Platform API operations.

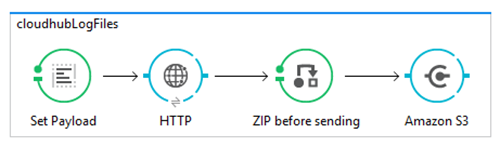

In this last sub-flow, we perform an additional processing step: compressing (zipping) the log file before sending it to our configured Amazon S3 bucket.
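The compression step can be sketched in a few lines. The example below zips a log in memory and builds an object key for the archive. The key layout (`app/date/instance.zip`) is a hypothetical naming scheme, and the upload itself is left out since it depends on the S3 client or connector in use.

```python
import io
import zipfile
from datetime import date


def zip_log(log_bytes: bytes, entry_name: str) -> bytes:
    """Compress a single log file into an in-memory zip archive."""
    buffer = io.BytesIO()
    with zipfile.ZipFile(buffer, "w", compression=zipfile.ZIP_DEFLATED) as archive:
        archive.writestr(entry_name, log_bytes)
    return buffer.getvalue()


def archive_key(app: str, instance_id: str, day: date) -> str:
    """Build a storage key for the archive (hypothetical naming scheme)."""
    return f"{app}/{day.isoformat()}/{instance_id}.zip"


if __name__ == "__main__":
    sample_log = b"INFO sample log line\n" * 1000  # stand-in for a downloaded log
    payload = zip_log(sample_log, "app.log")
    key = archive_key("orders-api", "i-1", date.today())
    print(f"{key}: {len(sample_log)} bytes compressed to {len(payload)} bytes")
```

Application logs are highly repetitive text, so compressing them before upload usually reduces storage and transfer size considerably.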

The full configuration for the workflow can be found here.

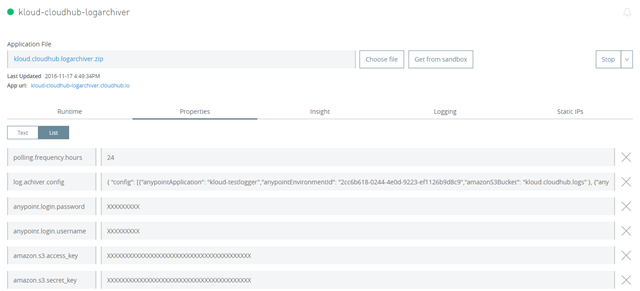

Once packaged and deployed to CloudHub, we configure the solution to archive application logs for any deployed CloudHub app, even one that has been deployed into an environment other than the one hosting the log archiver solution.

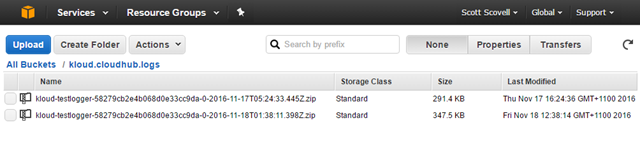

After running the solution for a day or so and checking the configured storage location, we can confirm that logs are being archived each day.

Known limitations:

- The Anypoint Management API does not allow downloading application logs for a given date range. That is, each time the solution runs, a full copy of the application log is downloaded. The API does support an operation to query the logs for a given date range and return matching entries as a result set, but that comes with additional constraints on result set size (number of rows) and entry size (message truncation).

- The RAML definitions in Anypoint Exchange currently do not parse correctly in Anypoint Studio. As mentioned above, to work around this we download the RAML manually and bring it into the project ourselves.

- Credentials supplied in configuration are in plain text. I suggest creating a dedicated Anypoint account and granting it permissions to only the target environments.

In this post I have outlined a solution that automates the archiving of your CloudHub application log files to external cloud storage. The solution allows periodic scheduling and multiple target applications to be configured even if they exist in different CloudHub environments. Deploy this solution once to archive all of your deployed application logs.