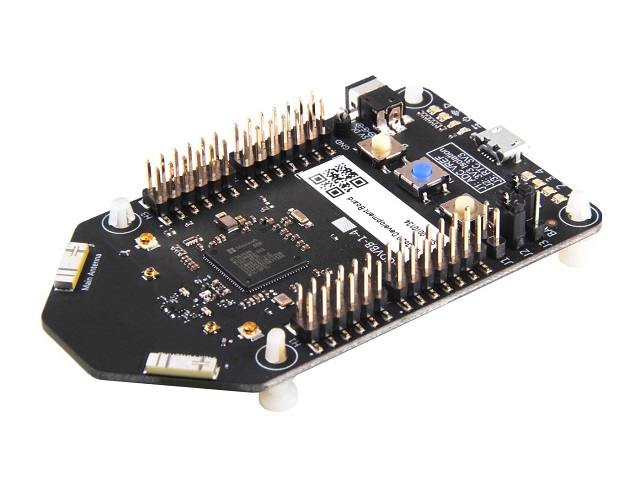

Azure Sphere – Initial Setup, Configuration and First Impressions

In April this year, Microsoft announced Azure Sphere. This was the same week I was preparing a presentation on Azure IoT for the Sydney location of the Global Azure Bootcamp. When pre-orders became available from Seeed Studio I naturally signed up, as I've previously bought many IoT-related pieces of hardware from them.

Fast forward to this week and the Azure Sphere MT3620 device shipped. It's a long weekend here in Sydney, Australia, and delivery wasn't due until after it, but by some miracle the package was delivered by DHL on the Friday, having left China only 3-4 days earlier.…