Azure Functions provide ServiceBus based trigger bindings that allow us to process messages dropped onto a SB queue or delivered to a SB subscription. In this post we’ll walk through creating an Azure Function using a ServiceBus trigger that implements a configurable message retry pattern.

Note: This post is not an introduction to Azure Functions nor an introduction to ServiceBus. For those not familiar with these Azure services, take a look at the Azure Documentation Centre.

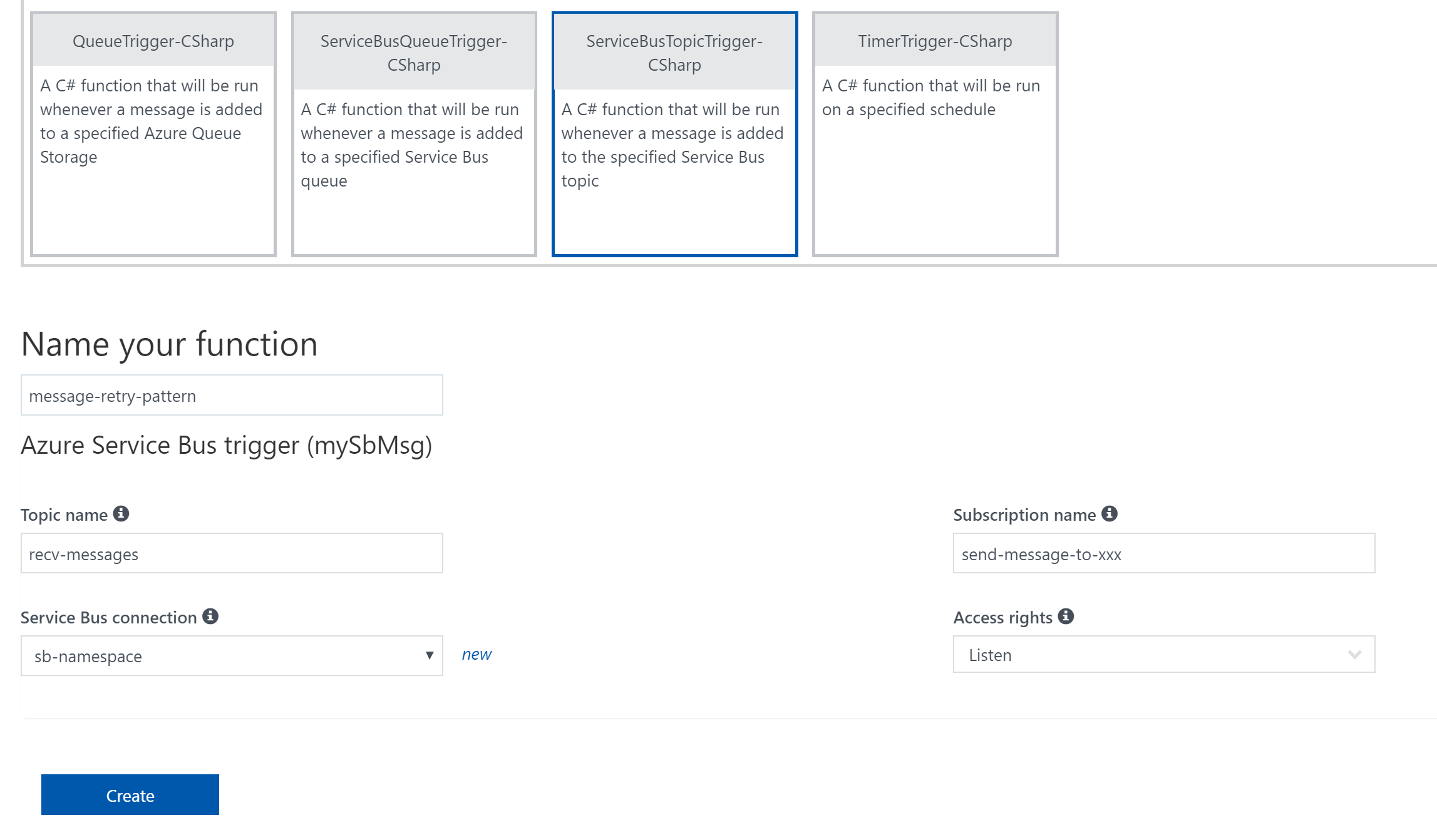

Let’s start by creating a simple C# function that listens for messages delivered to a SB subscription.

Azure Functions provide a number of ways we can receive the message into our functions, but for the purpose of this post we’ll use the BrokeredMessage type as we will want access to the message properties. See the above link for further options for receiving messages into Azure Functions via the ServiceBus trigger binding.

To use BrokeredMessage we’ll need to import the Microsoft.ServiceBus assembly and change the input type to BrokeredMessage.
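As a minimal sketch of that change, assuming a C# script (`run.csx`) function and a trigger binding whose `name` in `function.json` is `message` (a hypothetical name; use whatever your binding declares), the function might look like this:

```csharp
#r "Microsoft.ServiceBus"

using System;
using Microsoft.ServiceBus.Messaging;

// The parameter name must match the "name" of the ServiceBus trigger binding
// in function.json ("message" is an assumption made for this sketch).
public static void Run(BrokeredMessage message, TraceWriter log)
{
    // Receiving the BrokeredMessage itself gives access to its properties.
    log.Info($"C# ServiceBus trigger function received message: {message.MessageId}");
}
```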

If we did nothing else, our function would receive the message from SB, log its message ID and remove it from the subscription. More precisely, the SB trigger receives the message in peek-lock mode, which locks the message without removing it. If the function executes successfully, the message is completed and removed. If the function throws, the message is abandoned and becomes available for redelivery. So what happens when things go pear-shaped? Let’s add a pear and observe what happens.

Note: To send messages to the SB topic, I use Paolo Salvatori’s ServiceBus Explorer. This tool allows us to view queue and message properties mentioned in this post.

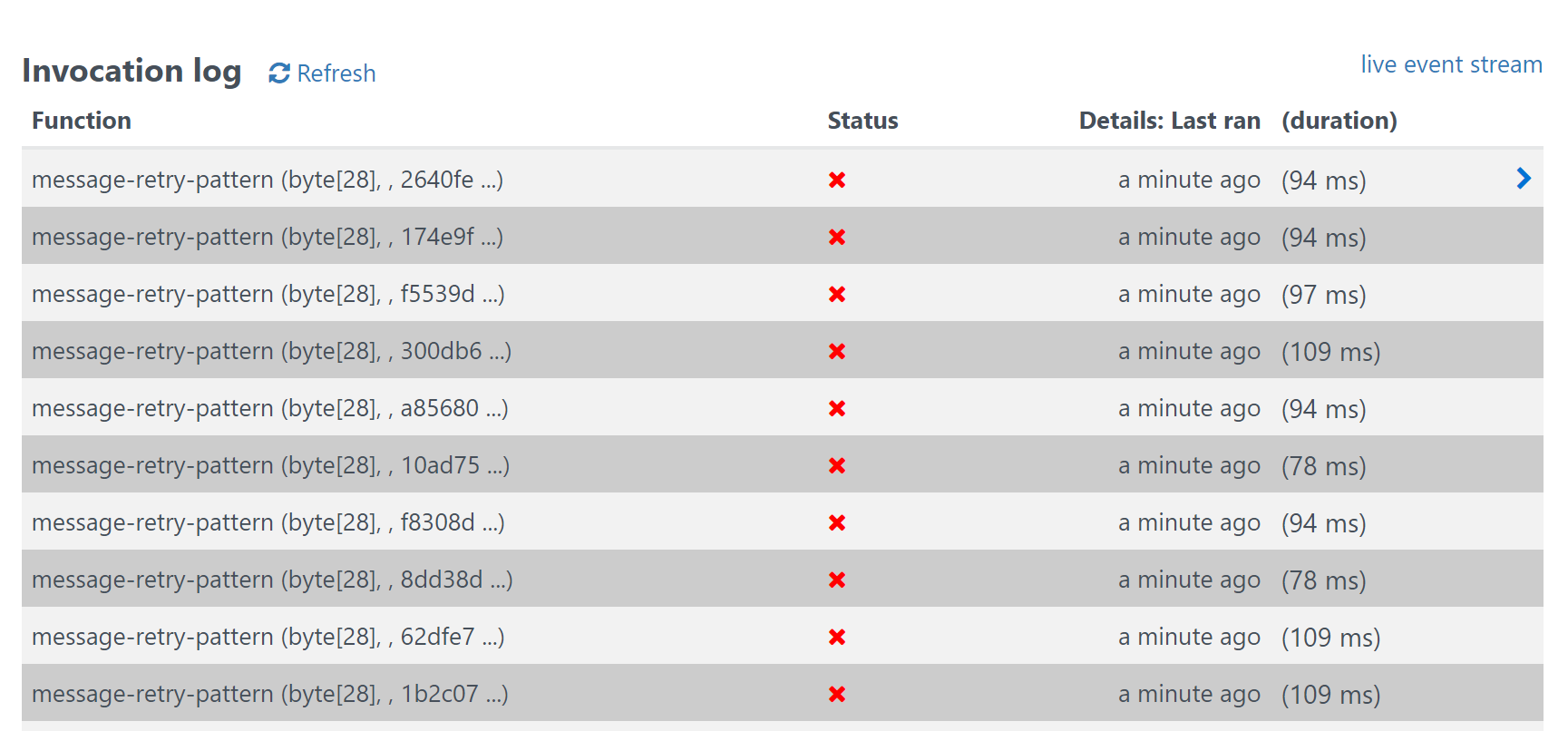

Notice the function being triggered multiple times. This will continue until the subscription’s MaxDeliveryCount is reached. By default, SB queues and subscriptions have a MaxDeliveryCount of 10. Let’s output the delivery count in our function using the DeliveryCount property on the BrokeredMessage class so we can observe this in action.
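A sketch of how the delivery count might be logged, with a deliberately thrown exception standing in for the pear. `MessageId` and `DeliveryCount` are properties of `BrokeredMessage`; the binding name `message` and the exception text are assumptions for illustration:

```csharp
#r "Microsoft.ServiceBus"

using System;
using Microsoft.ServiceBus.Messaging;

public static void Run(BrokeredMessage message, TraceWriter log)
{
    // DeliveryCount starts at 1 on the first delivery and grows with each redelivery.
    log.Info($"Message ID: {message.MessageId}, delivery count: {message.DeliveryCount}");

    // Simulated failure: the unhandled exception causes the message to be redelivered.
    throw new InvalidOperationException("Simulated processing failure");
}
```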

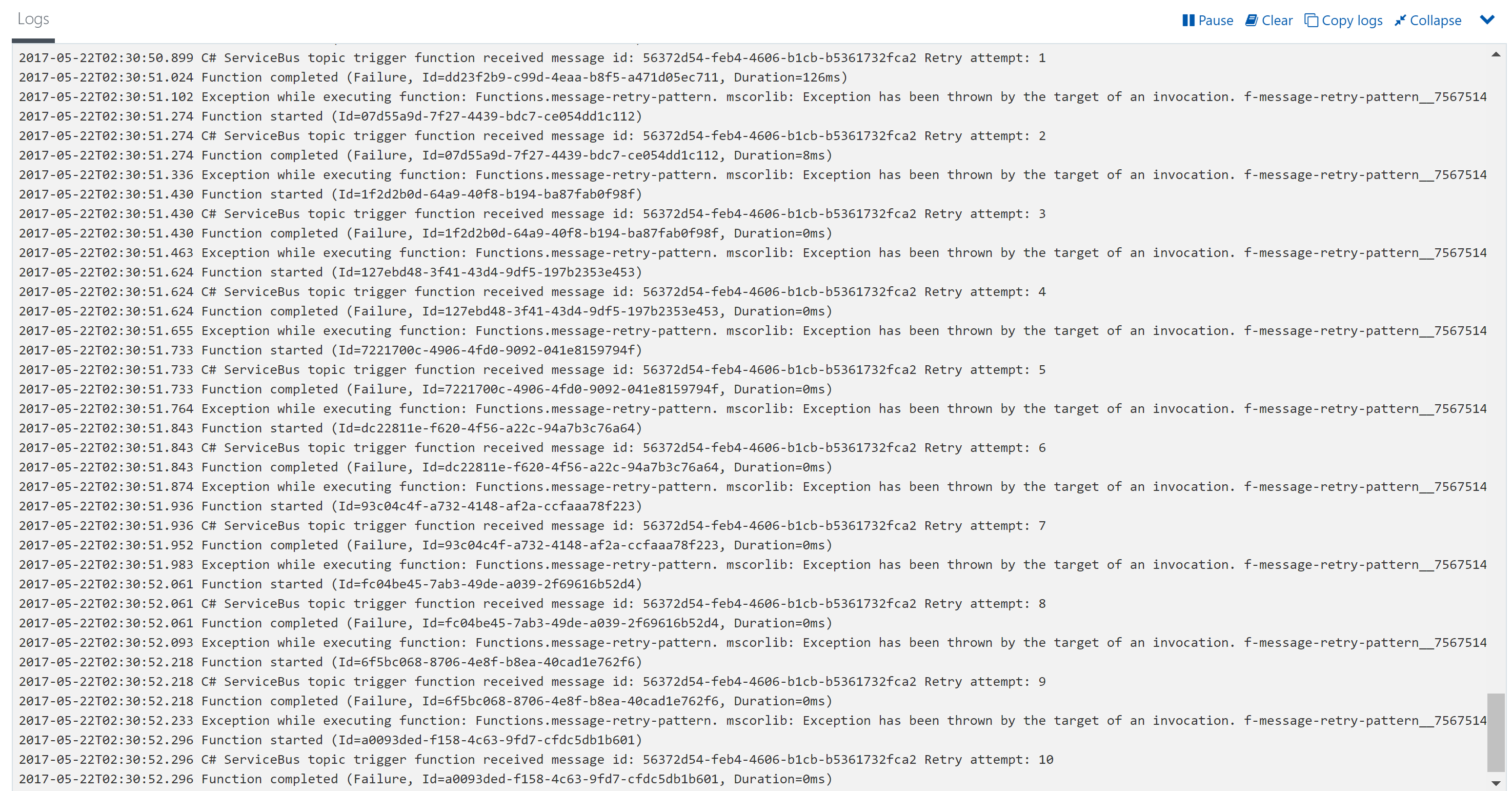

From the logs we see the message was delivered 10 times until the maximum number of deliveries was reached, at which point ServiceBus moved it to the dead letter queue. “Ah hah!”, I hear you say. Implement message retries by configuring the MaxDeliveryCount property on the SB queue or subscription. Well, that will work as a simple, static retry policy, but quite often we need a more configurable, dynamic approach: one based on the message context or the type of exception caught by the processing logic.

Typical use cases include handling business errors (e.g. message validation errors, downstream processing errors) vs transport errors (e.g. downstream service unavailable, request timeouts). When handling business errors we may elect not to retry the failed message and instead move it straight to the dead letter queue. When handling transport errors we may wish to treat transient failures (e.g. database connections) and protocol errors (e.g. 503 service unavailable) differently. We may wish to retry transient failures a few times over a short period, whereas for protocol errors we might want to keep trying the service over an extended period.

To implement this capability we’ll create a shared function that determines the appropriate retry policy based on the context of the exception thrown. It will then check the number of retry attempts against the maximum defined by the policy. If the retry attempts have been exceeded, the message is moved to the dead letter queue; otherwise the function waits for the duration defined by the policy before throwing the original exception.
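The handler described above could be sketched roughly as follows. This is an illustration rather than the exact shared function: the exception types, the `RetryPolicy` and `RetryHandler` names, the dead letter reason string, the transient policy values and the no-retry default for unknown exceptions are all assumptions. The dead-letter and wait actions are passed in as delegates so the decision logic does not depend on the ServiceBus SDK.

```csharp
using System;
using System.Runtime.ExceptionServices;

// Hypothetical exception types standing in for the failure categories above.
public class BusinessRuleException : Exception { public BusinessRuleException(string m) : base(m) { } }
public class ProtocolException : Exception { public ProtocolException(string m) : base(m) { } }
public class TransientException : Exception { public TransientException(string m) : base(m) { } }

public sealed class RetryPolicy
{
    public RetryPolicy(int maxRetries, TimeSpan interval)
    {
        MaxRetries = maxRetries;
        Interval = interval;
    }

    public int MaxRetries { get; }
    public TimeSpan Interval { get; }
}

public static class RetryHandler
{
    // Chooses a policy from the exception type.
    public static RetryPolicy SelectPolicy(Exception ex)
    {
        if (ex is BusinessRuleException) return new RetryPolicy(0, TimeSpan.Zero);          // never retry
        if (ex is ProtocolException) return new RetryPolicy(5, TimeSpan.FromSeconds(3));    // 5 retries, 3 s apart
        if (ex is TransientException) return new RetryPolicy(3, TimeSpan.FromSeconds(1));   // assumed values
        return new RetryPolicy(0, TimeSpan.Zero);                                           // assumed default: no retry
    }

    // deliveryCount is 1 on the first delivery, so "deliveryCount > MaxRetries"
    // allows the original attempt plus MaxRetries retries before dead-lettering.
    public static void Handle(int deliveryCount, Exception ex,
                              Action<string, string> deadLetter, Action<TimeSpan> wait)
    {
        RetryPolicy policy = SelectPolicy(ex);

        if (deliveryCount > policy.MaxRetries)
        {
            deadLetter("RetryPolicyExceeded", ex.Message);
            return;
        }

        wait(policy.Interval);

        // Rethrow the original exception, keeping its stack trace, so the message is redelivered.
        ExceptionDispatchInfo.Capture(ex).Throw();
    }
}
```

Inside a function, the delegates would typically wrap `BrokeredMessage.DeadLetter(reason, description)` and `Thread.Sleep(interval)`.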

Let’s change our function to throw mock exceptions and call our retry handler function to implement the message retry policy.
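A sketch of what the changed function might look like. It assumes a shared script (hypothetically at `..\Shared\RetryHandler.csx`) that defines a `ProtocolException` type and a static `RetryHandler.Handle(deliveryCount, exception, deadLetter, wait)` method; neither the path nor those names come from the SDK, and the binding name `message` is likewise an assumption:

```csharp
#r "Microsoft.ServiceBus"
#load "..\Shared\RetryHandler.csx"

using System;
using System.Threading;
using Microsoft.ServiceBus.Messaging;

public static void Run(BrokeredMessage message, TraceWriter log)
{
    try
    {
        log.Info($"Processing message {message.MessageId}, delivery count {message.DeliveryCount}");

        // Mock failure; swap in a different exception type to exercise another policy.
        throw new ProtocolException("503 Service Unavailable");
    }
    catch (Exception ex)
    {
        log.Error($"Processing failed: {ex.Message}", ex);

        // Either dead-letters the message, or waits and rethrows so it is redelivered.
        RetryHandler.Handle(
            message.DeliveryCount,
            ex,
            (reason, description) => message.DeadLetter(reason, description),
            interval => Thread.Sleep(interval));
    }
}
```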

Now our function implements some basic exception handling and checks for the appropriate retry policy to use. Let’s test that our retry policies work by throwing the different exceptions our policy supports.

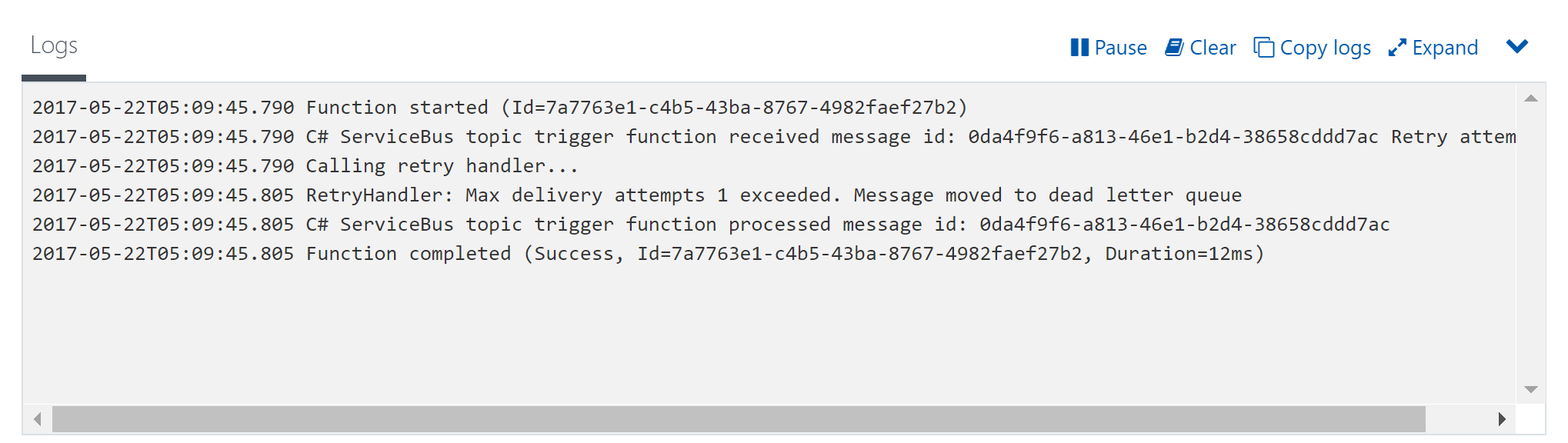

Testing throwing our mock business rules exception…

…we observe that the message is moved straight to the dead letter queue as per our defined policy.

Testing throwing our mock protocol exception…

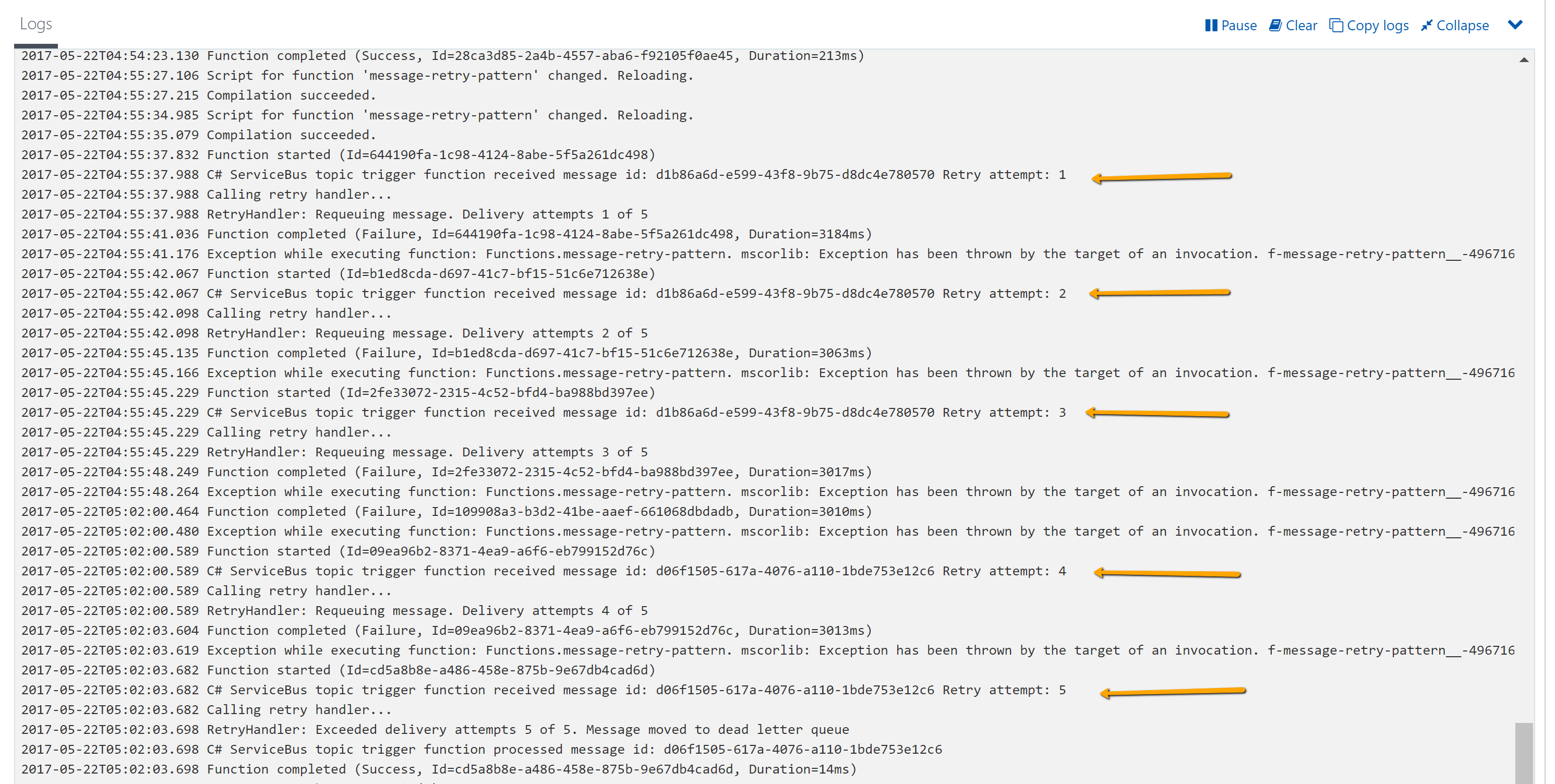

…we observe that we retry the message a total of 5 times, waiting 3 seconds between retries as per the defined policy for protocol errors.

Considerations

- Ensure your SB queues and subscriptions are defined with a MaxDeliveryCount greater than your maximum number of retries.

- Ensure your SB queues and subscriptions are defined with a TTL period greater than your maximum retry interval.

- Be aware that functions on Consumption-based plans have a default maximum execution duration of 5 minutes. App Service Plans don’t have this constraint. In either case, ensure the functionTimeout setting in the host.json file is greater than your maximum retry interval.

- Also be aware that if you are using Consumption based plans you will still be charged for time spent waiting for the retry interval (thread sleep).

Conclusion

In this post we have explored the behaviour of the ServiceBus trigger binding within Azure Functions and how we can implement a dynamic message retry policy. As long as you are willing to manage the deserialization of message content yourself (rather than have Azure Functions do it for you), you can gain access to the BrokeredMessage class and implement feature-rich messaging solutions on the Azure platform.

I see you are using the Azure Service Bus trigger in the function to make it event driven. One drawback of the event-driven pattern is replay capability. For example, if Azure Functions has an outage and comes back after 10 minutes, will it process the messages that accumulated in Service Bus during the outage?

Hard to believe adding Thread.Sleep() at the end of the Azure Function is best-practice…

Hi Assaf,

If the exception is caused by a transient failure, e.g. a connection being temporarily unavailable, it makes sense to wait before retrying. Do you know of a preferable way?