Leveraging the Azure Functions Table Storage Output Binding with PowerShell

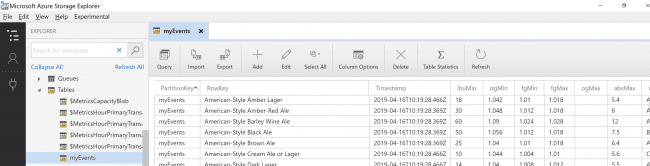

Recently I wrote this post on using PowerShell to bulk load data into Azure Table Service. Whilst this method works great it does rely on the AzureRM PowerShell module to provide the ability to batch ingest data into Azure Table Service.

I’m working on a solution that requires levels of automation to obtain data from events from Microsoft Graph and ingest that data into Azure Table Service. That doesn’t work with the AzureRM PowerShell Module.

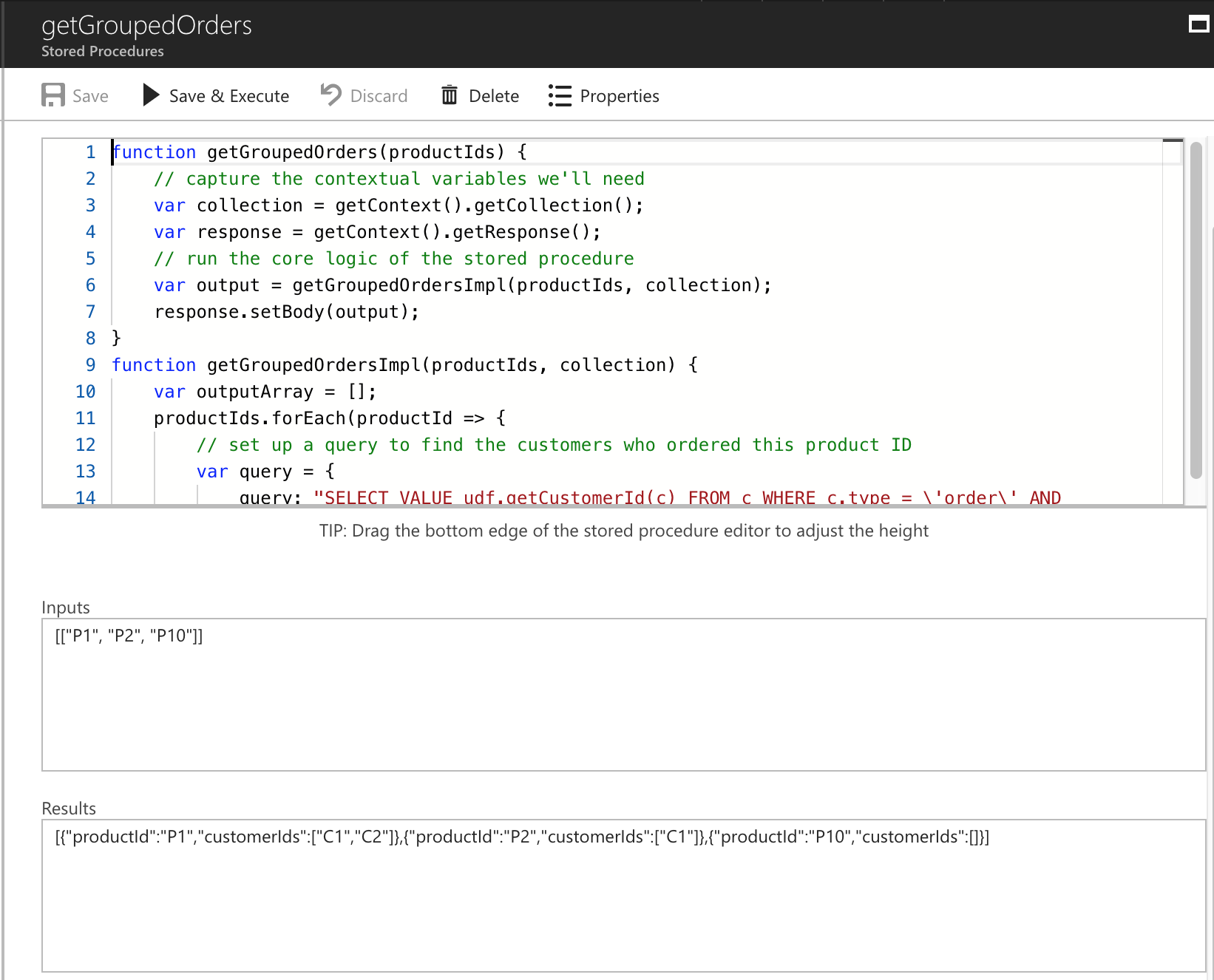

Azure Functions provide additional Bindings for Input and Output, but I’d never had the need to spend the time working it out how to output to Azure Table Storage (with PowerShell).… [Keep reading] “Leveraging the Azure Functions Table Storage Output Binding with PowerShell”