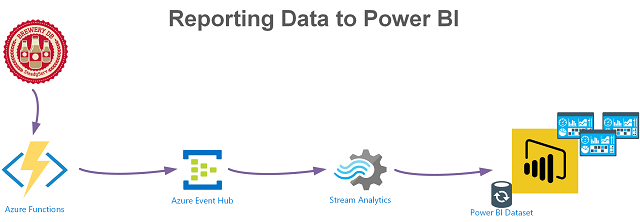

Outputting data from an Azure Function to Power BI with PowerShell

Last week I wrote a post detailing how to use the Azure Table Storage output binding in an Azure PowerShell Function. As part of the same solution, I also need to get data and events into Power BI for reporting dashboards. A PowerShell Azure Function can obtain the data, but getting it to Power BI takes several steps, and the first is the Azure Functions Event Hub output binding.…
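As a minimal sketch of that first step, here is how an Event Hub output binding can be declared in a function's `function.json`. This sketch assumes the Azure Functions runtime 2.x or later, which may not be the runtime version used in this post. Every name here is a hypothetical placeholder: the timer schedule, the binding name `outputEventHubMessage`, the hub name `powerbi-events`, and the app setting `EventHubConnectionAppSetting`. Substitute your own values.

```json
{
  "bindings": [
    {
      "type": "timerTrigger",
      "direction": "in",
      "name": "Timer",
      "schedule": "0 */5 * * * *"
    },
    {
      "type": "eventHub",
      "direction": "out",
      "name": "outputEventHubMessage",
      "eventHubName": "powerbi-events",
      "connection": "EventHubConnectionAppSetting"
    }
  ]
}
```

On that runtime, the PowerShell script would send an event by serializing it with `ConvertTo-Json` and passing the result to `Push-OutputBinding -Name outputEventHubMessage -Value $json`.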