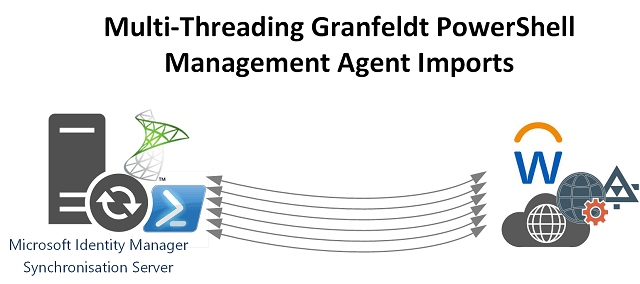

Multi-Threading Granfeldt PowerShell Management Agent Imports

As you are probably aware from my many posts on the topic, the Granfeldt PowerShell Management Agent is extremely flexible. When you use it to integrate Microsoft Identity Manager with modern REST APIs, it is easy to retrieve pages of results and process the objects through the Management Agent. However, sometimes you need to integrate Microsoft Identity Manager with an API (e.g. a SOAP web service) that doesn’t provide functionality to page results.