Come Together: The BA and UX Unicorns

Welcome to 2020. Yes indeed, we’re at the very top of the new year, when going back to work as a consultant with bright ideas and an invigorated sense of purpose may seem difficult once we’re met with the ongoing challenges of engagements that began before the holiday break. How can we set aside habitual ‘business-as-usual’ thinking and look at the work ahead with fresh eyes and perspective? Arguably, there is no better time to do this than at the top of the year.…

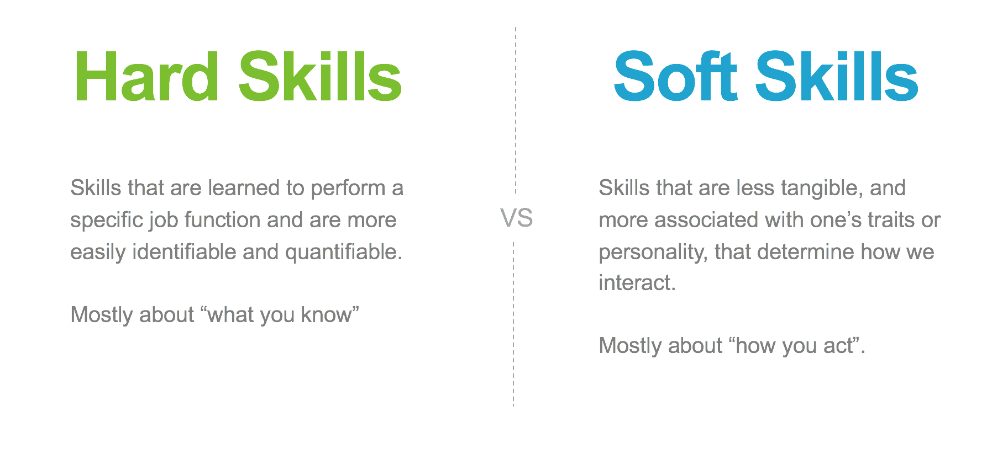

I aim to help people develop their soft skills, which are typically harder to define and require more attention. Below are concepts I work on developing every day; hopefully you can take some of them away and start developing them for yourself.