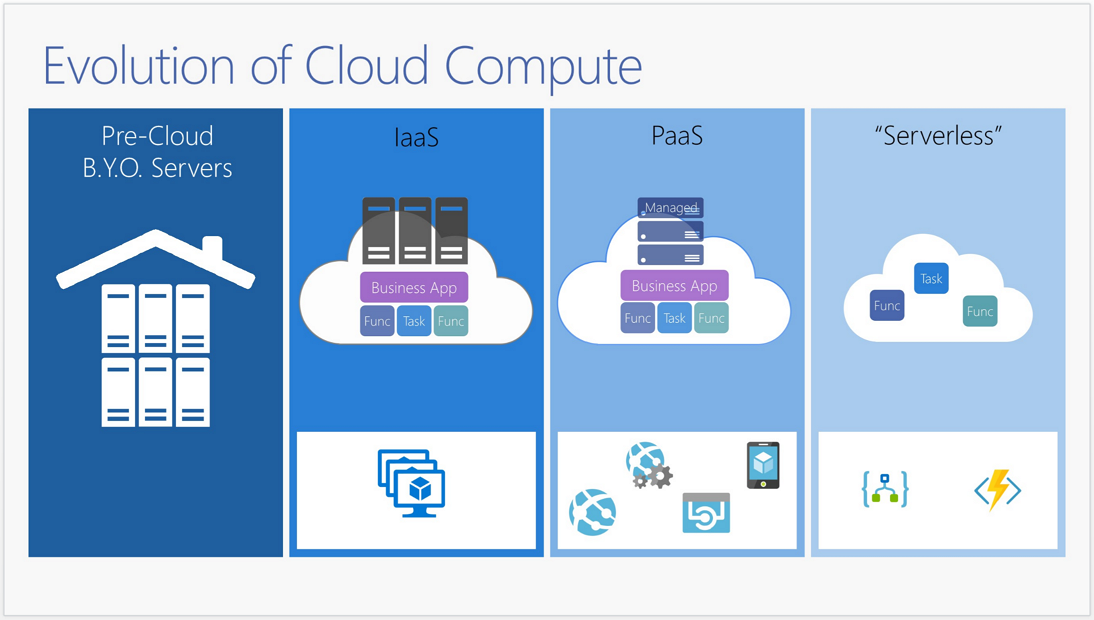

Organisations used to invest in IT infrastructure mostly for computers, networks and data centres. Over time, more of their budget went on hosting space. Nowadays, in cloud environments, they mostly spend their funds on computing power. The diagram above is a simple illustration of this evolution of cloud computing: from left to right, expenditure shifts from infrastructure to computing power.

In the cloud, when we need resources, we just create and use them, and when we no longer need them, we just delete them. But consider this: if your organisation runs dev, test and production environments in the cloud, the cost of resources running in the dev or test environments is easily overlooked unless it is carefully monitored. Your organisation might then receive an invoice for a massive amount, and that has to be avoided. In this post, we are going to look at the Azure Billing API, which was released in preview, and build a simple application to monitor costs effectively.

The sample code used for this post can be found here.

Azure Billing API Structure

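Azure Billing has two distinct APIs: the Usage API and the Rate Card API. The Usage API tells us how much of each resource we consumed, and the Rate Card API tells us how much each resource costs, so by combining them we can calculate how much we spent during a particular period.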

Usage API

This API is scoped to a subscription. Within a subscription, we can send a request to find out how many resources we used in a specified period. Here are the parameters we can use for these requests:

- ReportedStartTime: Starting date/time reported in the billing system.
- ReportedEndTime: Ending date/time reported in the billing system.
- Granularity: Either Daily or Hourly. Hourly returns more detailed results but takes far longer.
- Details: Either true or false. This determines whether usage is broken down to the instance level. If false is selected, usage for all instances of the same type is aggregated.
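To make the parameters concrete, here is a minimal Python sketch that only builds a Usage API request URL. It assumes the REST endpoint `Microsoft.Commerce/UsageAggregates` with api-version `2015-06-01-preview`, where the four parameters are named `reportedStartTime`, `reportedEndTime`, `aggregationGranularity` and `showDetails`. Treat those names as assumptions and check them against the current API reference. Authentication (a bearer token) is left out.

```python
from datetime import datetime, timezone
from urllib.parse import urlencode

# Assumed REST endpoint behind the Usage API (api-version 2015-06-01-preview).
USAGE_URL = ("https://management.azure.com/subscriptions/{sub}"
             "/providers/Microsoft.Commerce/UsageAggregates")


def build_usage_url(subscription_id: str, reported_start: datetime,
                    reported_end: datetime, granularity: str = "Daily",
                    show_details: bool = True) -> str:
    """Map ReportedStartTime/ReportedEndTime/Granularity/Details onto query parameters."""
    if granularity not in ("Daily", "Hourly"):
        raise ValueError("granularity must be 'Daily' or 'Hourly'")
    query = {
        "api-version": "2015-06-01-preview",
        "reportedStartTime": reported_start.isoformat(),
        "reportedEndTime": reported_end.isoformat(),
        "aggregationGranularity": granularity,
        "showDetails": "true" if show_details else "false",
    }
    # urlencode percent-encodes ':' and '+' in the ISO 8601 timestamps.
    return USAGE_URL.format(sub=subscription_id) + "?" + urlencode(query)


# Example: one reported day of daily, instance-level usage.
url = build_usage_url("00000000-0000-0000-0000-000000000000",
                      datetime(2017, 3, 1, tzinfo=timezone.utc),
                      datetime(2017, 3, 2, tzinfo=timezone.utc))
```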

There’s an interesting point about the term Reported. "Using" cloud resources can be interpreted from two perspectives: either the resources were actually used at the specified date/time, or the usage events were reported to the billing system at the specified date/time. The distinction exists because Azure is a distributed system spread all around the world, and depending on the data centre where the resources are situated, actual usage can be reported to the billing system with a delay. Therefore, even though we send requests based on the reported date/time, the responses containing usage data show the actual usage date/time.
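In practice, a request for one reported day can return records whose actual usage date is earlier. The Python sketch below picks out such back-dated records. It assumes each record carries the actual usage time as an ISO 8601 string under `usageStartTime`; that field name is meant to match the Usage API response, but treat it as an assumption.

```python
from datetime import date, datetime


def backdated_records(records: list, reported_start: date) -> list:
    """Return records whose actual usage date falls before the reported window.

    Each record is assumed to carry the actual usage time as an ISO 8601 string
    under 'usageStartTime' (e.g. '2017-03-08T00:00:00+00:00').
    """
    late = []
    for record in records:
        used_on = datetime.fromisoformat(record["usageStartTime"]).date()
        if used_on < reported_start:
            late.append(record)
    return late


# Requested for reported date 10 March, yet one record was actually used on 8 March.
records = [{"usageStartTime": "2017-03-08T00:00:00+00:00", "quantity": 2.0},
           {"usageStartTime": "2017-03-10T00:00:00+00:00", "quantity": 5.0}]
late = backdated_records(records, date(2017, 3, 10))
```

Because costs are aggregated by actual usage date, totals for earlier days can still change after they were first computed.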

Rate Card API

When you open a new Azure subscription, you might notice a code that looks like MS-AZR-****P. Have you seen that code before? It is called the Offer Durable ID, and different rates apply to resources depending on it. Please refer to this page for more details about the various types of offers. To send Rate Card API requests, we can use the following query parameters:

- OfferDurableId: The offer ID, e.g. MS-AZR-0017P (EA subscription).
- Currency: The currency you want prices in, e.g. AUD.
- Locale: The locale of your search region, e.g. en-AU.
- Region: The two-letter ISO code of the country in which you purchased this offer, e.g. AU.
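These four parameters are sent together as a single OData `$filter` expression. The Python sketch below only builds the URL. It assumes the REST endpoint `Microsoft.Commerce/RateCard` with api-version `2016-08-31-preview`, and that the region parameter is spelled `RegionInfo` inside the filter. Both are assumptions to verify against the API reference.

```python
from urllib.parse import quote

# Assumed REST endpoint behind the Rate Card API.
RATE_CARD_URL = ("https://management.azure.com/subscriptions/{sub}"
                 "/providers/Microsoft.Commerce/RateCard")


def build_rate_card_url(subscription_id: str, offer_durable_id: str,
                        currency: str, locale: str, region: str) -> str:
    """Combine the four parameters into one OData $filter expression."""
    odata_filter = (f"OfferDurableId eq '{offer_durable_id}' and "
                    f"Currency eq '{currency}' and "
                    f"Locale eq '{locale}' and "
                    f"RegionInfo eq '{region}'")  # Region is called RegionInfo here
    # quote() percent-encodes the spaces and single quotes in the filter.
    return (RATE_CARD_URL.format(sub=subscription_id)
            + "?api-version=2016-08-31-preview&$filter=" + quote(odata_filter))


url = build_rate_card_url("00000000-0000-0000-0000-000000000000",
                          "MS-AZR-0017P", "AUD", "en-AU", "AU")
```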

To calculate actual spending, we need to combine the two API responses: the quantity of each resource consumed (from the Usage API) multiplied by its rate (from the Rate Card API). Fortunately, there’s a good NuGet library called CodeHollow.AzureBillingApi, so we simply use it to work out Azure resource consumption costs.
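The core of that combination is a join on the meter ID followed by quantity × rate. Here is a minimal Python sketch of the idea; it is not CodeHollow.AzureBillingApi’s API. It simplifies the rate card to one flat unit rate per meter, whereas real rate cards can define tiered rates. The record keys `meterId` and `quantity` are assumptions.

```python
def resource_costs(usage_records: list, meter_rates: dict) -> list:
    """Attach a cost to each usage record: quantity x unit rate of its meter.

    usage_records: dicts with 'meterId' and 'quantity' (from the Usage API).
    meter_rates:   {meterId: unit rate} (from the Rate Card API).
    Simplification: a single flat rate per meter, ignoring tiered pricing.
    """
    costed = []
    for record in usage_records:
        rate = meter_rates.get(record["meterId"])
        if rate is None:
            raise KeyError(f"no rate for meter {record['meterId']}")
        # Copy the record rather than mutating the caller's data.
        costed.append({**record, "cost": record["quantity"] * rate})
    return costed


costed = resource_costs(
    [{"meterId": "m1", "quantity": 10}, {"meterId": "m2", "quantity": 2.5}],
    {"m1": 0.5, "m2": 4.0},
)
```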

Scenario

Kloud, as a cloud consulting firm, gives all consultants unrestricted access to the company’s subscription so that they can create resources to develop and test scenarios for their clients. However, once resources are created, there’s a high chance that they are not destroyed in a timely manner, which leads to unnecessary spending. Therefore, the management team decided to control costs per resource group with tags that 1) assign a resource group owner, 2) set a total spend limit, and 3) set a daily spend limit. Thanks to these tags, resource group owners are notified via email when the cost approaches 90% of the total spend limit and when it reaches the total spend limit. They are also notified if the cost exceeds the daily spend limit, so they can take appropriate action on their resource groups.
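Since resource group tag values are plain strings, the first step is to read them into a typed policy. The Python sketch below does that. The tag names `owner`, `totalSpendLimit` and `dailySpendLimit` are hypothetical, because the post does not say what Kloud actually calls them. A group missing any of the three tags is treated as having no policy.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class SpendPolicy:
    owner: str
    total_limit: float
    daily_limit: float


# Hypothetical tag names; adjust to whatever your organisation uses.
OWNER_TAG, TOTAL_TAG, DAILY_TAG = "owner", "totalSpendLimit", "dailySpendLimit"


def policy_from_tags(tags: Optional[dict]) -> Optional[SpendPolicy]:
    """Read the three cost-control tags; return None if any is missing or invalid."""
    tags = tags or {}  # resource groups without tags may have no tag object
    try:
        return SpendPolicy(owner=tags[OWNER_TAG],
                           total_limit=float(tags[TOTAL_TAG]),
                           daily_limit=float(tags[DAILY_TAG]))
    except (KeyError, ValueError):
        return None


policy = policy_from_tags({"owner": "jane@example.com",
                           "totalSpendLimit": "500", "dailySpendLimit": "20"})
```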

Sounds simple, right? Let’s code it!

Once written, the application should run daily to aggregate all costs, store them in a database, and notify the owners of resource groups that meet the conditions above.

Writing Common Libraries

The common libraries consist of three parts. First, they call the Azure Billing API and aggregate the data by date and resource group. Second, they store the aggregated data in a database. Finally, they notify the owners of resource groups whose costs approach or reach the total spend limit, or exceed the daily spend limit.

Azure Billing API Call & Data Aggregation

CodeHollow.AzureBillingApi saves a huge amount of API-calling work. A simple implementation using it might look like:
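CodeHollow.AzureBillingApi is a .NET library, so it cannot be shown in Python. As an illustration of one chore it hides, here is a Python sketch of paging through Usage API results. It assumes each response page is JSON of the form `{"value": [...], "nextLink": ...}`, with `nextLink` absent on the last page. `get_page` is a hypothetical helper that performs the authenticated GET.

```python
from typing import Callable, Iterator


def iter_usage_records(first_url: str,
                       get_page: Callable[[str], dict]) -> Iterator[dict]:
    """Yield every usage record, following 'nextLink' continuation URLs.

    get_page is a hypothetical helper that performs an authenticated GET and
    returns the decoded JSON body of one page.
    """
    url = first_url
    while url:
        page = get_page(url)
        yield from page.get("value", [])
        url = page.get("nextLink")  # None on the last page ends the loop


# Example with two fake pages instead of real HTTP calls.
pages = {"page1": {"value": [{"id": 1}, {"id": 2}], "nextLink": "page2"},
         "page2": {"value": [{"id": 3}]}}
ids = [record["id"] for record in iter_usage_records("page1", pages.__getitem__)]
```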

First, as in the code above, we fetch all resource usage and cost data; then, as below, the data needs to be grouped by date and resource group.
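A minimal Python sketch of the grouping step follows. It assumes each record has already been costed and carries the actual usage time under `usageStartTime` and the ARM resource URI under `resourceUri`. With Details turned on, the Usage API appears to put that URI inside the record’s instance data; extracting it is left out here. Resource group names are lower-cased because ARM treats them case-insensitively.

```python
import re
from collections import defaultdict
from datetime import datetime

# Resource group segment of an ARM resource URI, matched case-insensitively.
_RESOURCE_GROUP = re.compile(r"/resourceGroups/([^/]+)", re.IGNORECASE)


def resource_group_of(resource_uri: str):
    match = _RESOURCE_GROUP.search(resource_uri)
    return match.group(1).lower() if match else None


def cost_by_date_and_group(costed_records: list) -> dict:
    """Sum 'cost' per (actual usage date, resource group).

    Records without a resource group in their URI are kept under group None
    so that no cost is silently dropped.
    """
    totals = defaultdict(float)
    for record in costed_records:
        day = datetime.fromisoformat(record["usageStartTime"]).date()
        totals[(day, resource_group_of(record["resourceUri"]))] += record["cost"]
    return dict(totals)


records = [
    {"usageStartTime": "2017-03-01T00:00:00+00:00", "cost": 1.5,
     "resourceUri": "/subscriptions/abc/resourceGroups/RG-Dev/providers/Microsoft.Compute/virtualMachines/vm1"},
    {"usageStartTime": "2017-03-01T00:00:00+00:00", "cost": 2.5,
     "resourceUri": "/subscriptions/abc/resourcegroups/rg-dev/providers/Microsoft.Storage/storageAccounts/st1"},
    {"usageStartTime": "2017-03-02T00:00:00+00:00", "cost": 3.0,
     "resourceUri": "/subscriptions/abc/resourceGroups/rg-test/providers/Microsoft.Web/sites/app1"},
]
totals = cost_by_date_and_group(records)
```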

We now have all cost-related data per resource group. Next, we need to fetch the tag values of the resource groups with another API call and merge them with the data populated previously.
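The resource group list comes from Azure Resource Manager’s `GET /subscriptions/{subscriptionId}/resourcegroups` endpoint. Its JSON body has a `value` array of objects with `name` and an optional `tags` map. The Python sketch below assumes that shape and turns the body into a name → tags lookup. Paging of very long lists is omitted.

```python
import json


def tags_by_resource_group(response_body: str) -> dict:
    """Map lower-cased resource group name -> tags from a resource group list response.

    Groups without tags get an empty dict, so later lookups never see None.
    """
    payload = json.loads(response_body)
    return {group["name"].lower(): group.get("tags") or {}
            for group in payload.get("value", [])}


body = json.dumps({"value": [
    {"name": "RG-Dev", "location": "australiaeast",
     "tags": {"owner": "jane@example.com", "totalSpendLimit": "500"}},
    {"name": "rg-test", "location": "australiaeast"},
]})
lookup = tags_by_resource_group(body)
```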

We can look up all resource groups in a given subscription as above, and merge the result with the cost data found previously, as below.
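The merge itself is a simple join on the resource group name. Here is a Python sketch assuming costs keyed by `(date, group)` and tags keyed by lower-cased group name. Groups missing from the tag lookup, for example because they were deleted after the usage was recorded, get empty tags rather than raising an error.

```python
from datetime import date


def merge_costs_with_tags(costs: dict, tags: dict) -> list:
    """Join {(date, group): cost} with {group: tags} into flat rows, sorted by date and group."""
    rows = []
    ordered = sorted(costs.items(), key=lambda item: (item[0][0], item[0][1] or ""))
    for (day, group), cost in ordered:
        rows.append({"date": day, "resource_group": group,
                     "cost": cost, "tags": tags.get(group, {})})
    return rows


rows = merge_costs_with_tags(
    {(date(2017, 3, 2), "rg-test"): 3.0, (date(2017, 3, 1), "rg-dev"): 4.0},
    {"rg-dev": {"owner": "jane@example.com"}},
)
```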

Data Storage

This is the simplest part: just use Entity Framework to store the data in the database.
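Entity Framework is a .NET library, so the Python sketch below swaps in the standard `sqlite3` module to show the same step. The table and column names are hypothetical. The composite primary key plus `INSERT OR REPLACE` means re-running the job over the same data overwrites rows instead of duplicating them.

```python
import sqlite3
from datetime import date

# Hypothetical schema: one row per resource group per usage date.
SCHEMA = """
CREATE TABLE IF NOT EXISTS daily_cost (
    usage_date     TEXT NOT NULL,
    resource_group TEXT NOT NULL,
    cost           REAL NOT NULL,
    PRIMARY KEY (usage_date, resource_group)
)"""


def store_daily_costs(conn: sqlite3.Connection, costs: dict) -> None:
    """Write {(date, group): cost}; re-running over the same data overwrites rows."""
    conn.execute(SCHEMA)
    conn.executemany(
        "INSERT OR REPLACE INTO daily_cost (usage_date, resource_group, cost) "
        "VALUES (?, ?, ?)",
        [(day.isoformat(), group, cost) for (day, group), cost in costs.items()],
    )
    conn.commit()


conn = sqlite3.connect(":memory:")
costs = {(date(2017, 3, 1), "rg-dev"): 4.0, (date(2017, 3, 2), "rg-test"): 3.0}
store_daily_costs(conn, costs)
store_daily_costs(conn, costs)  # a second run does not duplicate rows
```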

So far, we’ve implemented the data aggregation part.

Notification

First, we need to fetch the resource groups that meet the notification conditions, which is not hard to write.
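A minimal Python sketch of that filter, following the three rules in the scenario. The 90% threshold comes from the post. The `GroupSpend` record and its field names are hypothetical. Reaching the total limit suppresses the "approaching" warning, so an owner does not get both for the same group.

```python
from dataclasses import dataclass

WARNING_RATIO = 0.9  # "approaching" means 90% of the total spend limit


@dataclass
class GroupSpend:
    name: str
    owner: str
    total_cost: float         # all recorded cost for the group
    latest_daily_cost: float  # cost for the most recent day
    total_limit: float
    daily_limit: float


def alert_reasons(group: GroupSpend) -> list:
    reasons = []
    if group.total_cost >= group.total_limit:
        reasons.append("reached total spend limit")
    elif group.total_cost >= WARNING_RATIO * group.total_limit:
        reasons.append("approaching total spend limit")
    if group.latest_daily_cost > group.daily_limit:
        reasons.append("exceeded daily spend limit")
    return reasons


def groups_to_notify(groups: list) -> list:
    """Return (group, reasons) only for groups meeting at least one condition."""
    flagged = []
    for group in groups:
        reasons = alert_reasons(group)
        if reasons:
            flagged.append((group, reasons))
    return flagged


groups = [GroupSpend("rg-a", "a@example.com", 95, 5, 100, 20),
          GroupSpend("rg-b", "b@example.com", 120, 5, 100, 20),
          GroupSpend("rg-c", "c@example.com", 10, 30, 100, 20),
          GroupSpend("rg-d", "d@example.com", 10, 5, 100, 20)]
flagged = groups_to_notify(groups)
```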

The code above is self-explanatory: it only returns resource groups that 1) approach the total spend limit, 2) reach or exceed the total spend limit, or 3) exceed the daily spend limit. It works well, even though it smells a bit.

The following code shows how to send notifications to the resource group owners.
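A minimal Python sketch of the notification step. It groups the flagged resource groups by owner so each owner receives one message. `send` defaults to `print`, which writes to the screen only. An email or SMS sender with the same `(recipient, body)` shape could be passed instead; that signature is an assumption of this sketch.

```python
from collections import defaultdict


def notify_owners(flagged: list, send=print) -> None:
    """flagged: (group_name, owner, reasons) tuples; sends one message per owner."""
    by_owner = defaultdict(list)
    for group_name, owner, reasons in flagged:
        by_owner[owner].append(f"{group_name}: {', '.join(reasons)}")
    for owner, lines in by_owner.items():
        send(owner, "Azure cost alert\n" + "\n".join(lines))


sent = []
notify_owners(
    [("rg-a", "a@example.com", ["approaching total spend limit"]),
     ("rg-b", "a@example.com", ["reached total spend limit"]),
     ("rg-c", "c@example.com", ["exceeded daily spend limit"])],
    send=lambda to, body: sent.append((to, body)),
)
```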

It only writes alerts to the screen, but this is where we could plug in SendGrid for email notifications or Twilio for SMS alerts.

Now we’ve got the basic application structure. How do we run it? There are two approaches: Azure WebJobs and Azure Functions. Let’s move on.

Monitoring Application on Azure WebJob

A console application might be the simplest way for this purpose. Once the console app is built, it can be deployed to an Azure WebJob straight away. Here’s the simple console application code.
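The console entry point only needs to run the two services in order. Here is a Python sketch of that wiring, where any object with a `run()` method stands in for the hypothetical Aggregator and Reminder services. It returns a non-zero exit code on failure so a failed run is visible in the process exit status, and the Reminder is skipped if aggregation fails.

```python
import sys
from typing import Protocol


class Service(Protocol):
    def run(self) -> None: ...


def main(aggregator: Service, reminder: Service) -> int:
    """Aggregate and store costs first, then send reminders; return an exit code."""
    try:
        aggregator.run()
        reminder.run()
    except Exception as exc:
        print(f"cost monitor failed: {exc}", file=sys.stderr)
        return 1
    return 0
```

In a real script, the entry point would call `sys.exit(main(...))` with the concrete services.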

The Aggregator service collects and stores data, and the Reminder service sends alerts to resource group owners. To deploy this to an Azure WebJob, we need to create two extra files: run.cmd and settings.job.

settings.job: It contains the CRON expression for the scheduled job. For example, if this WebJob runs every night at 00:20, the JSON object might look like:
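A minimal settings.job for that schedule. WebJobs use six-field NCRONTAB expressions (`{second} {minute} {hour} {day} {month} {day-of-week}`), so 00:20 every day becomes `0 20 0 * * *`. The schedule is evaluated in UTC unless the App Service is configured with a different time zone.

```json
{
  "schedule": "0 20 0 * * *"
}
```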

run.cmd: When this WebJob runs, it always looks up run.cmd first, which is a simple batch command file. Therefore, if necessary, we can put the actual executable command, with appropriate arguments, into this file.

That’s how we can use Azure WebJobs for monitoring.

Monitoring Application on Azure Function

We can use Azure Functions instead, but in this case we HAVE TO make sure that:

The Azure Functions instance MUST run on an App Service Plan, NOT a Consumption Plan

Basically, this app runs for 1-2 minutes at the shortest and 30-40 minutes at the longest. Such long executions do not suit the Consumption Plan, which charges by execution time and also limits each execution to 5 minutes by default (10 minutes at most), so a long run would simply time out. On the other hand, if we create the Function instance under an App Service Plan we are already paying for, it costs nothing extra.

A Timer Trigger function suits our purpose. Using the precompiled Azure Functions approach is also more helpful, and the function code might look like:

Here’s the function.json for this Timer Trigger function:
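A minimal function.json for a timer trigger on the same 00:20 schedule as the WebJob. Azure Functions timer triggers also use six-field NCRONTAB expressions. The binding name `myTimer` is hypothetical and must match the timer parameter name in the function. A precompiled function additionally needs `scriptFile` (the compiled assembly) and `entryPoint` (the fully qualified method name). They are omitted here because their values depend on your project.

```json
{
  "bindings": [
    {
      "name": "myTimer",
      "type": "timerTrigger",
      "direction": "in",
      "schedule": "0 20 0 * * *"
    }
  ],
  "disabled": false
}
```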

Here we have shown how to quickly write a simple cost-monitoring application using the Azure Billing API. Cloud resources can certainly be used effectively and efficiently, but the flip side is, of course, that we have to be very careful not to be wasteful. Implementing a monitoring application like this one helps prevent unwanted cost leaks.