Performing OCR with Azure Cognitive Services and HTML5 Media Capture API

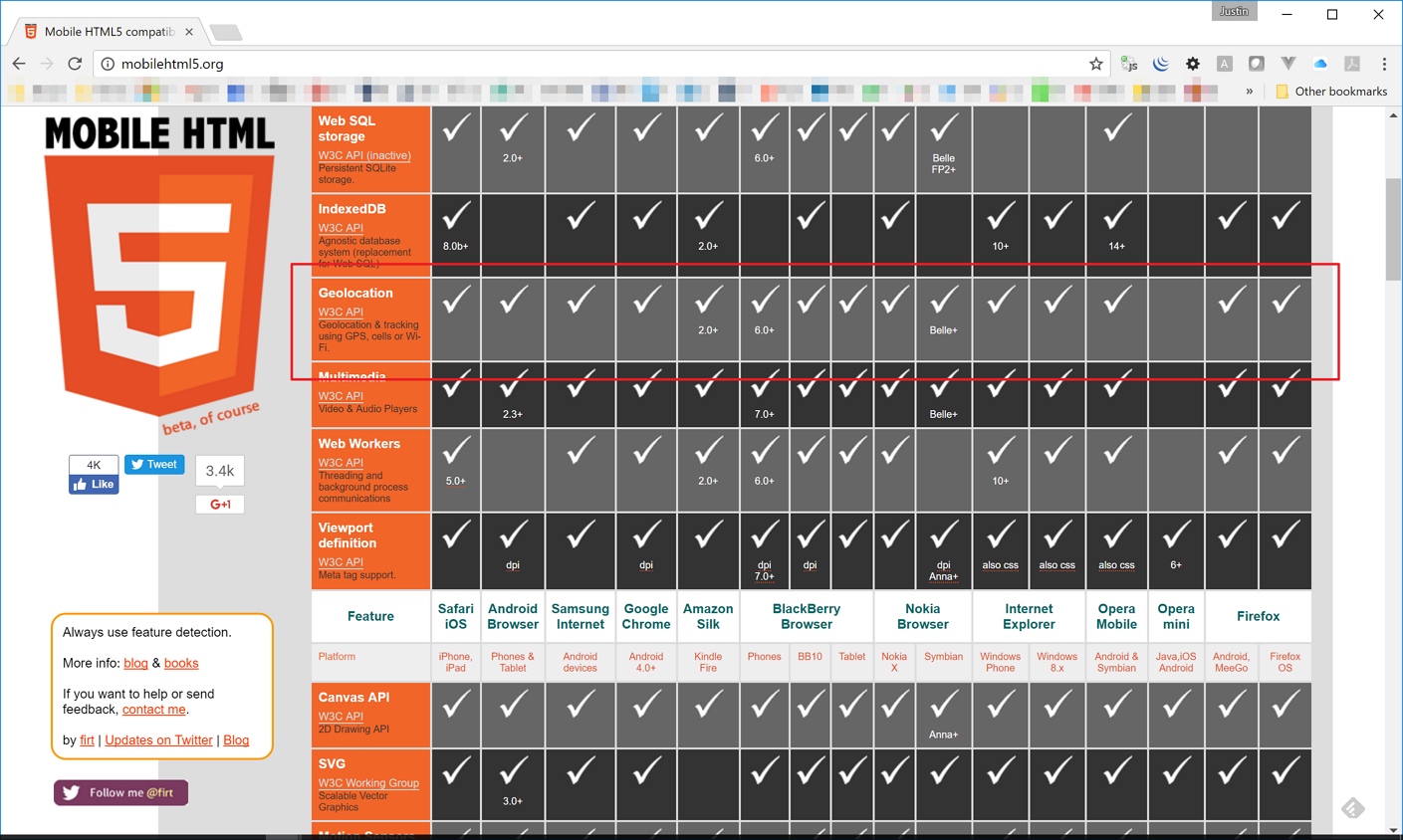

There are a few ways to access the camera on mobile devices during application development. In our previous post, we used the getUserMedia API for camera access. Unfortunately, as of this writing, not all browsers support this API, so we should provide a fallback approach. The HTML5 Media Capture API, on the other hand, is supported by almost all modern browsers, and we can utilise it with ease. In this post, we’re going to use Vue.js, TypeScript and ASP.NET…
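The fallback idea can be sketched in TypeScript: use getUserMedia when the browser exposes it, and otherwise render a file input with the HTML Media Capture `capture` attribute. This is a minimal sketch under stated assumptions, not the post's actual implementation. The names `chooseCameraStrategy`, `mediaCaptureAttributes` and `renderCaptureInput`, and the narrowed `CameraCapableNavigator` shape, are hypothetical helpers introduced here for illustration.

```typescript
// Hypothetical, narrowed view of the browser's navigator: only the property
// we inspect. The real DOM `navigator` object is assignable to this shape.
interface CameraCapableNavigator {
  mediaDevices?: { getUserMedia?: unknown };
}

type CameraStrategy = "getUserMedia" | "mediaCapture";

// Prefer getUserMedia when it exists; otherwise fall back to HTML Media Capture.
function chooseCameraStrategy(nav: CameraCapableNavigator): CameraStrategy {
  return typeof nav.mediaDevices?.getUserMedia === "function"
    ? "getUserMedia"
    : "mediaCapture";
}

// Attributes for the fallback <input>. HTML Media Capture works through the
// `capture` attribute on a file input: "environment" hints at the rear-facing
// camera and "user" at the front-facing one.
interface CaptureInputAttributes {
  type: "file";
  accept: string;
  capture: "user" | "environment";
}

function mediaCaptureAttributes(rearCamera = true): CaptureInputAttributes {
  return {
    type: "file",
    accept: "image/*",
    capture: rearCamera ? "environment" : "user",
  };
}

// Serialise the attributes into markup (e.g. for a Vue template or a test).
function renderCaptureInput(attrs: CaptureInputAttributes): string {
  return `<input type="${attrs.type}" accept="${attrs.accept}" capture="${attrs.capture}">`;
}
```

In a browser you would call `chooseCameraStrategy(navigator)`. When it returns `"mediaCapture"`, you would render the input from `mediaCaptureAttributes()`. Browsers that ignore `capture` still show a normal file picker, which is what makes this a low-risk fallback.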