Auditing Azure AD Registered Applications

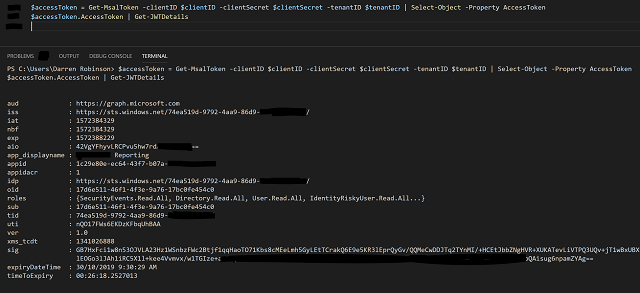

Azure AD Registered Applications are the Azure AD equivalent of Active Directory service accounts. Over time their number keeps growing, and each one holds permissions to consume information from Azure AD and/or Microsoft Graph. For an Azure AD administrator, these Registered Applications carry ongoing maintenance: checking credential validity and, more importantly, application validity.

Credential expiration for Azure AD Registered Applications is readily visible in the Azure Portal. We can quickly see Current, Expired and Expiring Soon credentials as shown in the screenshot below.
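Outside the portal, the same three-way split can be approximated in a script. The following is a minimal sketch, not the portal's actual logic: it assumes application records shaped like Microsoft Graph `application` objects (with `passwordCredentials` and `keyCredentials` entries carrying an `endDateTime`), and the 30-day "Expiring Soon" window, the function names and the sample data are all hypothetical choices made for illustration.

```python
from datetime import datetime, timedelta, timezone


def classify_credential(end_date_time, now=None, warn_days=30):
    """Return 'Expired', 'Expiring Soon' or 'Current' for one credential.

    end_date_time is an ISO 8601 UTC string such as '2024-05-01T00:00:00Z'.
    warn_days is an assumed threshold, not a value taken from the portal.
    """
    now = now or datetime.now(timezone.utc)
    # Replace the trailing 'Z' so older Python versions can parse the value.
    end = datetime.fromisoformat(end_date_time.replace("Z", "+00:00"))
    if end <= now:
        return "Expired"
    if end - now <= timedelta(days=warn_days):
        return "Expiring Soon"
    return "Current"


def audit_applications(apps, now=None, warn_days=30):
    """Flatten every secret and certificate of every app into report rows."""
    report = []
    for app in apps:
        creds = app.get("passwordCredentials", []) + app.get("keyCredentials", [])
        for cred in creds:
            report.append({
                "application": app.get("displayName"),
                "credential": cred.get("displayName"),
                "status": classify_credential(cred["endDateTime"], now, warn_days),
            })
    return report


if __name__ == "__main__":
    # Hypothetical sample data standing in for a Microsoft Graph response.
    sample_apps = [
        {
            "displayName": "Example Reporting App",
            "passwordCredentials": [
                {"displayName": "old secret", "endDateTime": "2020-01-01T00:00:00Z"},
            ],
            "keyCredentials": [
                {"displayName": "signing cert", "endDateTime": "2999-01-01T00:00:00Z"},
            ],
        }
    ]
    for row in audit_applications(sample_apps):
        print(row)
```

Passing `now` explicitly keeps the classification repeatable, which helps when comparing audit runs taken on different days. Applications that appear in the report with no current credentials at all are natural candidates for the application validity review.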