Access Azure linked templates from a private repository

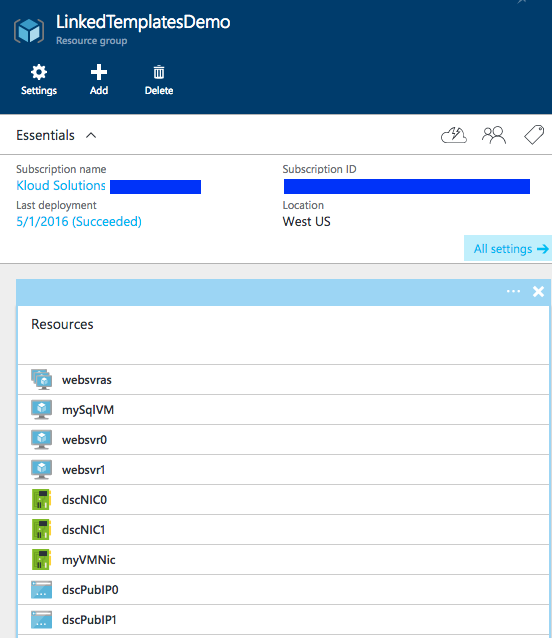

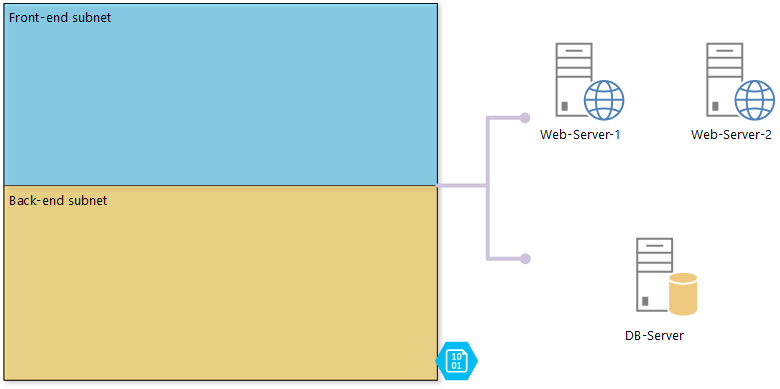

I was recently tasked with proposing a way to use linked templates, and in particular how to reference templates stored in a private repository. The Azure Resource Manager (ARM) engine takes a URI to locate and deploy a linked template, so that URI must be reachable by ARM. If your templates live in a public repository, ARM can fetch them without any trouble, but what if you use a private repository? This post shows you how.
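For context, a parent template typically references a linked template through a `Microsoft.Resources/deployments` resource whose `templateLink.uri` property holds the URI that ARM fetches at deployment time. The Python sketch below just builds such a parent template as a dictionary and prints it as JSON. The deployment name, the `apiVersion` value, and the example URI are assumptions chosen for illustration, not the setup from this post, and the sketch does not by itself solve private-repository access.

```python
import json


def build_parent_template(linked_template_uri: str) -> dict:
    """Return a parent ARM template that deploys one linked template by URI.

    ARM itself downloads linked_template_uri during deployment, so the URI
    must be reachable by Azure Resource Manager.
    """
    return {
        "$schema": "https://schema.management.azure.com/schemas/2019-04-01/deploymentTemplate.json#",
        "contentVersion": "1.0.0.0",
        "resources": [
            {
                # Nested deployment resource that points at the linked template.
                "type": "Microsoft.Resources/deployments",
                "apiVersion": "2021-04-01",  # assumed API version
                "name": "linkedTemplate",  # hypothetical deployment name
                "properties": {
                    "mode": "Incremental",
                    "templateLink": {
                        # The URI ARM fetches; it must be accessible to ARM.
                        "uri": linked_template_uri,
                        "contentVersion": "1.0.0.0",
                    },
                },
            }
        ],
    }


if __name__ == "__main__":
    # Hypothetical URI; replace it with the location of your own template.
    uri = "https://example.com/templates/storage.json"
    print(json.dumps(build_parent_template(uri), indent=2))
```

Whatever value ends up in `templateLink.uri` is what ARM has to be able to reach, which is exactly the constraint a private repository runs into.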

In this example, I use Bitbucket – a Git-based source control product by Atlassian. …