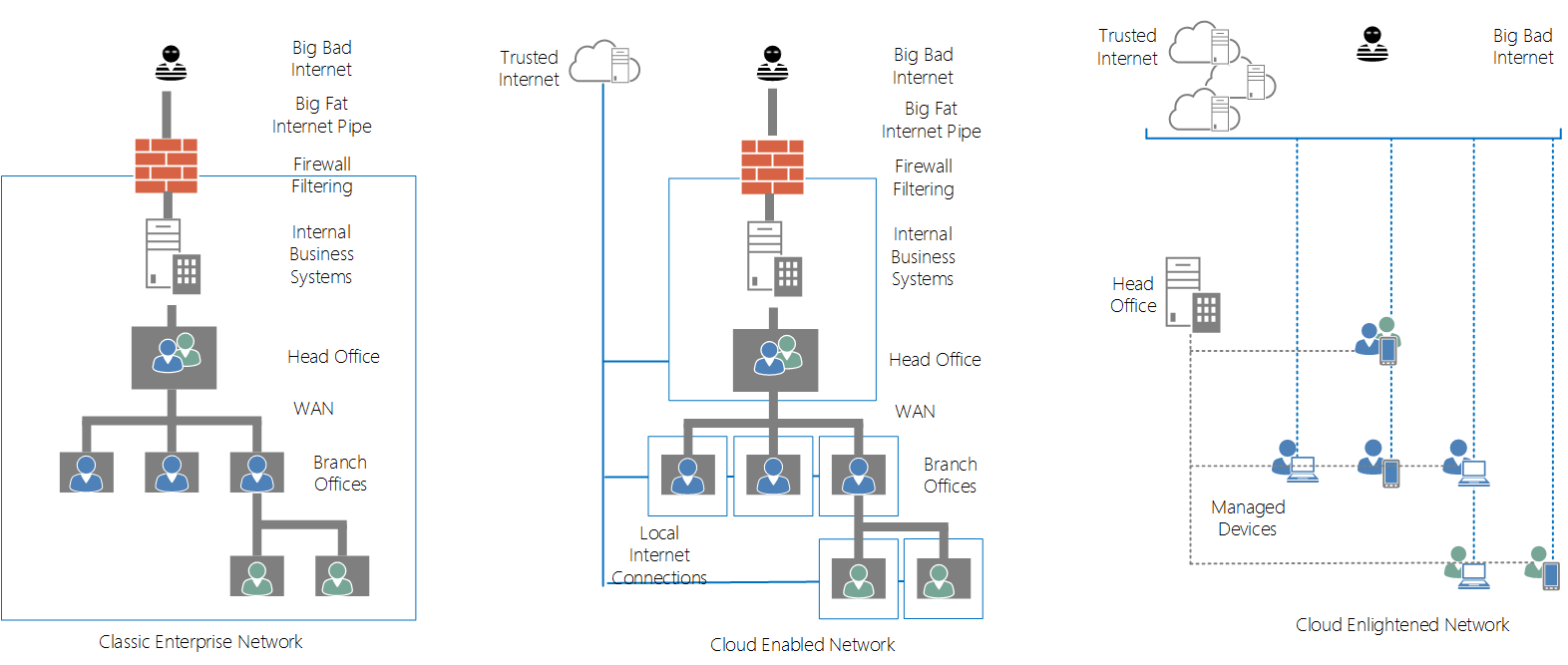

This intro is unashamedly lifted from a Microsoft article, but I couldn’t say it any better: “The cloud has enormous potential to reduce operational expenses and achieve new levels of scale, but moving workloads away from the people who depend on them can increase networking costs and hurt productivity. Users expect high performance and don’t care where their applications and data are hosted.”

Cloud is a journey, and getting there takes more than just migrating your workloads. In most organisations, architecture, infrastructure and networking have grown around the assumption that business systems are internal and the Internet is external. Big WAN connections to offices and well-protected interfaces to the Internet are the default networking solution for that situation.

Who moved my Cheese?

So what happens when business systems move to the Internet? The need for branch offices to communicate with head office diminishes, but the need to communicate efficiently with the Internet becomes more important. The paradox is that employees are likely to be much better served by their own internet connection when accessing cloud-based business services than by the filtered, sanitised and high-latency connection provided by work. Cloud nirvana is reached when there are no internal business systems and WAN connections are replaced by managed devices with independent internet connections attached to employees. In fact, a true end state for cloud and BYOD may be that the device and the connectivity are left to the employee rather than provided as a service by the company.

So, with that end state in mind, it’s worth considering the situation most businesses find themselves in today: “Cloud services are upon us but we have a classic enterprise network”. They may have migrated to Office 365 for Intranet and Email but still have a big centralised internet connection and a heavy dependency on WAN links, and they treat the whole Internet, including the newly migrated Email and SharePoint services, as one big untrusted Internet.

It is an interesting problem. Cloud services (like Office 365) relieve the organisation of the concerns of delivering the service, but that is matched equally by a loss of control and ownership, which affects the way the service can be delivered to internal employees. When an employee uses the cloud service, a secure tunnel is set up between the cloud service provider and the employee, which disintermediates the employer who is paying the bills! The cloud service provider owns the IP addresses, the URL and the security certificate used to encrypt the traffic, which is a significant change from the highly flexible on-premises model where the organisation controlled everything. The underlying problem is that the SSL used to encrypt traffic between a service provider and a single user is not flexible enough to support the new three-way trust relationship that arises when an organisation uses a cloud provider to deliver services to employees.

Take back the streets

All is not lost. Just because you’ve accepted cloud into your life doesn’t mean you have to totally submit to it. Cloud service providers do a great job of using caching services such as Akamai to drop content right at the organisational doorstep, but efficiently transporting that data through internal networks to the employee is now harder. In this blog we’ll look at a strategy for taking back ownership of the traffic and deploying an efficient and cheap internal CDN to serve content to branch offices with no direct internet connection. While the example is centred on Office 365 SharePoint Online, it is equally applicable to any cloud-based service.

There are commercial solutions to the problem from vendors like Riverbed and Cisco, who will, by dropping an expensive device on your network, “accelerate” your Office 365 experience for internal employees. But can you do that with out-of-the-box software and commodity hardware? Yes, you can. To build our own Cloud Accelerator we need the following:

- 1 Windows Server (can be deployed internally or externally)

- Access to makecert.exe through one of the Windows development kits

- 1 Office 365 SharePoint Online Service (tenant.sharepoint.com) and an account to test with

Application Publisher

Using the Fiddler tool while browsing to your cloud application reveals where the content actually comes from. For our SharePoint Online site it looks something like this: https://gist.github.com/petermreid/8d2567b4703daff35ae2

Note that the content actually comes from a mixture of the SharePoint Online server and a set of CDN URLs (cdn.sharepointonline.com and prod.msocdn.com). That is because Microsoft makes heavy use of caching services like Akamai to offload much of the content serving and deliver it as close to the organisation’s internet links as possible. The other thing to notice is the size: the total size of our Intranet home page (without any caching) is a whopping 1MB, which may be a significant burden on internet links if it is not cached effectively across users.

To cache it, though, we need to step into the middle of the connection to see the traffic and work out what can be cached on intermediate servers. Microsoft holds certificates, issued by a trusted certificate authority, for *.sharepoint.com and *.sharepointonline.com, which enables it to encrypt your tenant’s Office 365 traffic. When you browse to Office 365 SharePoint Online, an encrypted tunnel is set up between the browser and Microsoft, but we can take back ownership of the traffic by breaking that secure tunnel and republishing the traffic using internal application publication.
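To see the split between the tenant and the CDN hosts for yourself, one option is to total the bytes per host from the Fiddler capture. The sketch below assumes a hypothetical list of `(url, body_bytes)` pairs (for example transcribed by hand from Fiddler's session list); the helper names and the sample sizes are made up for illustration and are not measurements.

```python
from collections import defaultdict
from urllib.parse import urlsplit


def bytes_by_host(sessions):
    """Total response body bytes per host, largest first.

    `sessions` is a hypothetical list of (url, body_bytes) pairs, for example
    transcribed by hand from Fiddler's session list.
    """
    totals = defaultdict(int)
    for url, body_bytes in sessions:
        host = urlsplit(url).hostname  # lower-cased, port stripped
        totals[host] += body_bytes
    return dict(sorted(totals.items(), key=lambda item: item[1], reverse=True))


def cdn_share(totals, cdn_hosts=("cdn.sharepointonline.com", "prod.msocdn.com")):
    """Fraction of all bytes served from the CDN hosts seen in the capture."""
    total = sum(totals.values())
    from_cdn = sum(size for host, size in totals.items() if host in cdn_hosts)
    return from_cdn / total if total else 0.0


if __name__ == "__main__":
    # Made-up sizes for illustration only; substitute your own capture.
    sample = [
        ("https://tenant.sharepoint.com/", 100_000),
        ("https://cdn.sharepointonline.com/a/core.js", 400_000),
        ("https://prod.msocdn.com/b/theme.css", 150_000),
        ("https://cdn.sharepointonline.com/a/init.js", 350_000),
    ]
    totals = bytes_by_host(sample)
    for host, size in totals.items():
        print(f"{host:28} {size / 1000:7.1f} KB")
    print(f"CDN share: {cdn_share(totals):.0%}")
```

If most of the bytes come from the CDN hosts, those are the ones worth caching close to the users.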

To do this we need to create our own certificate authority, issue our own tenant.sharepoint.com and cdn.sharepointonline.com certificates, and tell our users to trust those certificates by putting the new root certificate in their Trusted Root Certificate store. This is no different from the trick corporate proxy servers use to filter the content of your secure browser traffic. But here we don’t need to sniff all traffic, just the Office 365 traffic we already own, which means we can pre-create the certificates rather than generating them on the fly.

Trust Me

The first step is to create a new set of certificates that represent the trust between organisation and employee. There’s a great article describing the process here, but we’ll run through the steps below (replace the word tenant with your SharePoint Online tenant name):

- First, find your copy of makecert.exe; mine is in C:\Program Files (x86)\Windows Kits\8.1\bin\x64.

- Create a new certificate authority (or use one that your organisation already has). Mine is called “Petes Root Authority”, and it is from this root that we will build the new domain-specific certificates.

- Then use the certificate authority (stored in CA.pvk and CA.cer) to create a new certificate for tenant.sharepoint.com

- And one for the CDN domain cdn.sharepointonline.com: https://gist.github.com/petermreid/4a2bee1b3b90dfef39d0

Following those instructions, you should end up with a certificate authority and a server certificate for each of tenant.sharepoint.com and cdn.sharepointonline.com, which we will install on our Office 365 Application Publisher. This is a classic man-in-the-middle attack, where the man in the middle is you!
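The author's exact commands live in the gist above. As a hedged sketch of what such a sequence typically looks like, the Python below builds (but does not run) makecert and pvk2pfx command lines using standard options of those tools: a self-signed authority (`-r -cy authority`), server certificates signed by it (`-ic`/`-iv`) with the Server Authentication usage, and a .pfx bundle for IIS. The option choices (SHA-256, 2048-bit keys) and the `<host>.pvk`/`<host>.cer`/`<host>.pfx` file naming are assumptions, not the author's settings.

```python
import subprocess

# Assumed makecert/pvk2pfx options; the gist has the author's exact commands.
CA_NAME = "Petes Root Authority"
HOSTS = ["tenant.sharepoint.com", "cdn.sharepointonline.com"]


def ca_command(name=CA_NAME):
    """Self-signed root authority: writes CA.pvk (private key) and CA.cer."""
    return ["makecert", "-n", f"CN={name}", "-r", "-cy", "authority",
            "-a", "sha256", "-len", "2048", "-sv", "CA.pvk", "CA.cer"]


def server_commands(host):
    """Server certificate for `host`, signed by the CA, then bundled as .pfx."""
    return [
        ["makecert", "-n", f"CN={host}",
         "-ic", "CA.cer", "-iv", "CA.pvk",      # issuer certificate and key
         "-a", "sha256", "-len", "2048",
         "-sky", "exchange", "-pe",             # exportable key-exchange key
         "-eku", "1.3.6.1.5.5.7.3.1",           # Server Authentication EKU
         "-sv", f"{host}.pvk", f"{host}.cer"],
        ["pvk2pfx", "-pvk", f"{host}.pvk", "-spc", f"{host}.cer",
         "-pfx", f"{host}.pfx"],
    ]


if __name__ == "__main__":
    # Print the commands; run them from a prompt where makecert is on PATH
    # (makecert prompts for the private key passwords).
    print(subprocess.list2cmdline(ca_command()))
    for host in HOSTS:
        for command in server_commands(host):
            print(subprocess.list2cmdline(command))
```

Generating the command lines rather than typing them keeps the CA file names and host names consistent across both server certificates.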

Application Publication

To publish the cloud service internally we will use a Windows Server running Internet Information Services (IIS) with Application Request Routing (ARR). Normally this setup is used to publish content to the Internet, but we will use it the other way around: to publish the Internet internally. The server can be anywhere (in the cloud, on-premises or even in a branch office); it just needs to be in a position to intercept our browsing traffic.

IIS Install

In the Server Manager->Local Server

- Set IE Enhanced Security Configuration to Off for Administrators

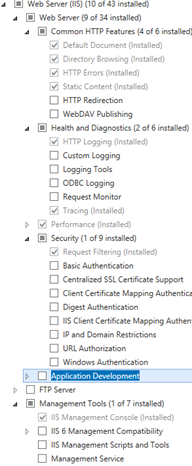

- Manage->Add Roles and Features->Role-based->Add the Web Server (IIS) role.

- Click through the “Features”

- Click “Web Server Role”

- Click on “Role Services”

- Click Health and Diagnostics->Tracing (used to debug issues)

- Click Install

- Close the dialog when complete

Certificates

Install the certificates we created.

-

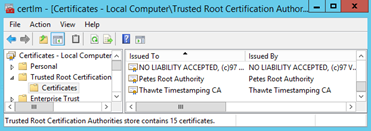

Install the CA into Trusted Root Certificate Authorities

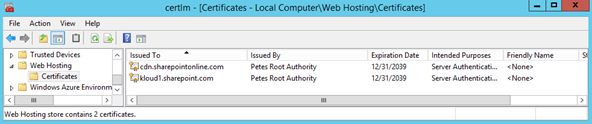

- Install the server certificates into the Web Hosting store by double-clicking each .pfx file,

- choosing Store Location -> Local Machine, and

- choosing Place all certificates in the following store -> Web Hosting

Click Start, type “Manage Computer Certificates” and choose the Web Hosting branch to see the installed certificates.

Web Platform Installer

- Click Start, type IIS Admin and press Enter

- Click on the machine name

- Accept the dialog to install the Web Platform Installer and follow steps to download, run and install

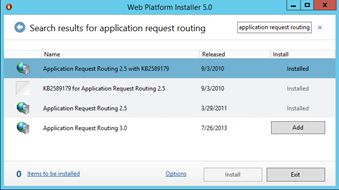

- Once installed, click Products and search for “Application Request Routing”

- Then use the platform installer to install “Application Request Routing 2.5 with KB2589179”

- Accept the prerequisites, click Install and then Finish

- Close IIS Admin and reopen using Start->IIS admin

Application Request Routing

- Click on the top level server name and double click on “Application Request Routing Cache” icon

- Under “Drive Management” select Add Drive and choose a new empty drive to store content in

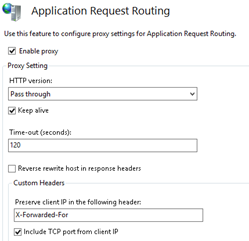

- Under “Server Proxy Settings” check the “Enable proxy” box

- Clear the check box “Reverse rewrite host in response headers” (we just want content to pass straight through without any modification)

- and click “Apply”

Web Site

- Click on the “Default Web Site”, choose “Explore” and deploy the following web.config to that directory.

- This config file contains all the rules required to configure Application Request Routing and URL Rewrite to forward the incoming requests to the site and to send the responses back to the requestor.

- There is an additional filter (locked down to the SharePoint and CDN hosts) to ensure the router doesn’t become an “open proxy” that could be used to mask attacks on public websites.
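The open-proxy guard boils down to an allow-list check on the requested host. The web.config expresses this as a URL Rewrite condition; the Python below is only a hypothetical illustration of the same logic, with a made-up `ALLOWED_HOSTS` set and `is_allowed` helper, not the author's rule.

```python
# Hypothetical allow-list mirroring the "locked down to sharepoint and cdn"
# filter; replace tenant with your own tenant name.
ALLOWED_HOSTS = frozenset({"tenant.sharepoint.com", "cdn.sharepointonline.com"})


def is_allowed(host_header):
    """Forward only requests whose Host header names an allow-listed site.

    Anything else is refused, so the publisher cannot be used as an open
    proxy to reach arbitrary websites.
    """
    host = host_header.split(":", 1)[0].strip().lower().rstrip(".")
    return host in ALLOWED_HOSTS
```

Exact matching matters here: a looser "contains sharepoint" pattern would also accept a host such as tenant.sharepoint.com.evil.example.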

Site Binding

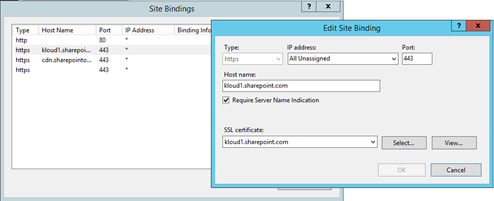

The default site listens for HTTP requests on port 80; additional bindings are required to serve the HTTPS traffic.

- In IIS Admin, click Bindings…, then Add to open the Add Site Binding dialog

- Choose https and add a binding for each of the certificates we created earlier (make sure to select Require Server Name Indication and type the corresponding host name so IIS knows which certificate to use for which request)
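Server Name Indication works because the browser sends the host name it wants in the TLS handshake, before any certificate is presented, so a single site can hold several certificates. As an illustration of that mechanism only (IIS does this for you), here is a sketch using Python's `ssl.SSLContext.sni_callback`. It assumes PEM copies of each certificate and key, since Python's ssl module does not load .pfx files, and it simply rejects unknown names; IIS's own fallback behaviour may differ.

```python
import ssl


def make_sni_context(bindings):
    """Build a TLS server context that picks a certificate per SNI host name.

    `bindings` maps host name -> (certfile, keyfile). Python's ssl module loads
    PEM files, not .pfx, so this assumes PEM copies of each certificate/key.
    """
    per_host = {}
    for host, (certfile, keyfile) in bindings.items():
        ctx = ssl.SSLContext(ssl.PROTOCOL_TLS_SERVER)
        ctx.load_cert_chain(certfile, keyfile)
        per_host[host.lower()] = ctx

    def choose_certificate(ssl_socket, server_name, initial_context):
        # server_name is the host name from the ClientHello (None if no SNI).
        ctx = per_host.get((server_name or "").lower())
        if ctx is None:
            # No binding for this name: abort the handshake.
            return ssl.ALERT_DESCRIPTION_UNRECOGNIZED_NAME
        ssl_socket.context = ctx  # switch to the matching certificate
        return None

    default = ssl.SSLContext(ssl.PROTOCOL_TLS_SERVER)
    default.sni_callback = choose_certificate
    return default
```

A call such as `make_sni_context({"tenant.sharepoint.com": ("tenant.pem", "tenant.key")})` (hypothetical file names) would give one context that serves the right certificate per host, which is what each "Require Server Name Indication" binding achieves in IIS.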

URL Rewrite

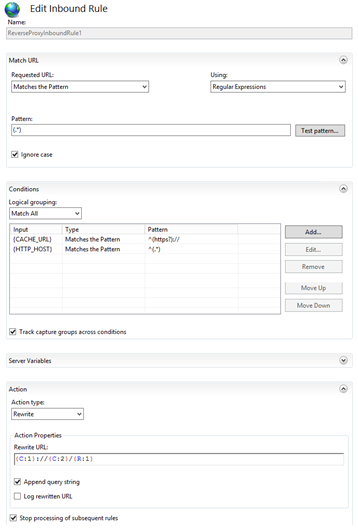

Just one simple rule drives it all: it takes any incoming request and re-requests it! (Note: browsing directly to the website causes an infinite loop of requests, which IIS kindly breaks after about 20 requests.) Double-click the URL Rewrite icon in IIS to see the rule represented in the GUI…

Or the same as seen in web.config…
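The rule itself is in the web.config and isn't reproduced here. The Python below is a hypothetical imitation of the idea: rebuild the requested URL from the incoming scheme, Host header and path, and request it again. It assumes the publisher server itself does not have the hosts-file override, so its own DNS still resolves tenant.sharepoint.com to Microsoft. The `would_loop` helper illustrates why browsing to the server directly loops: the rebuilt URL points back at the publisher.

```python
from urllib.parse import urlunsplit


def upstream_url(scheme, host_header, path_and_query):
    """Re-create the URL the browser asked for: same scheme, host and path.

    Assumes the publisher's own DNS still resolves the host to Microsoft,
    so requesting this URL from the publisher fetches the real content.
    """
    path, _, query = path_and_query.partition("?")
    return urlunsplit((scheme, host_header, path or "/", query, ""))


def would_loop(host_header, own_names):
    """True when the request is addressed to the publisher itself.

    Re-requesting such a URL just sends it back to the same site, which is
    the loop you see when browsing to the server directly.
    """
    return host_header.split(":", 1)[0].lower() in own_names
```

For example, `upstream_url("https", "tenant.sharepoint.com", "/sites/intranet?x=1")` gives back `https://tenant.sharepoint.com/sites/intranet?x=1`, the same URL the browser asked for.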

Network Configuration

DNS

So now all we have to do is change the mapping of the URL to point to our web server instead of Microsoft’s. If the organisation has an internal DNS, it is easy to repoint the required entries. Otherwise, and for testing, we can use a local DNS override, the “hosts” file (located at [Windows]\System32\drivers\etc), by adding the following entries:

- ipaddress.of.your.apppublisher tenant.sharepoint.com

- ipaddress.of.your.apppublisher cdn.sharepointonline.com

(Note: if you’ve done this using an Azure server, the IP address will be the address of your “Cloud Service”.)

Now when you browse to tenant.sharepoint.com the traffic actually comes via our new Application Publisher web site, but the content is the same! To check that the content really is coming through the router, look at the response headers (using Fiddler): ARR inserts an additional header, X-Powered-By: ARR/2.5.
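Both steps are easy to script. The sketch below uses hypothetical helper names: it formats the hosts-file lines for a given publisher IP (192.0.2.10 is a documentation address standing in for yours) and checks a list of response headers for the X-Powered-By value that ARR adds. A list of pairs is used rather than a dict because a response can carry more than one X-Powered-By header.

```python
def hosts_entries(publisher_ip, hostnames):
    """Lines for C:\\Windows\\System32\\drivers\\etc\\hosts (IP, then name)."""
    return [f"{publisher_ip} {name}" for name in hostnames]


def served_via_arr(headers):
    """True if any X-Powered-By header mentions ARR.

    `headers` is a list of (name, value) pairs, such as the one returned by
    http.client.HTTPResponse.getheaders(); a list keeps repeated headers.
    """
    return any(name.lower() == "x-powered-by" and "ARR/" in value
               for name, value in headers)


if __name__ == "__main__":
    # 192.0.2.10 is a documentation address standing in for your publisher.
    for line in hosts_entries("192.0.2.10",
                              ["tenant.sharepoint.com", "cdn.sharepointonline.com"]):
        print(line)
```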

But remember that any user who intends to receive content through this accelerator must have the root certificate installed in their “Trusted Root Certificate Authorities” store. By installing that root, the user is acknowledging that they trust the acceleration server to intercept traffic on their behalf.

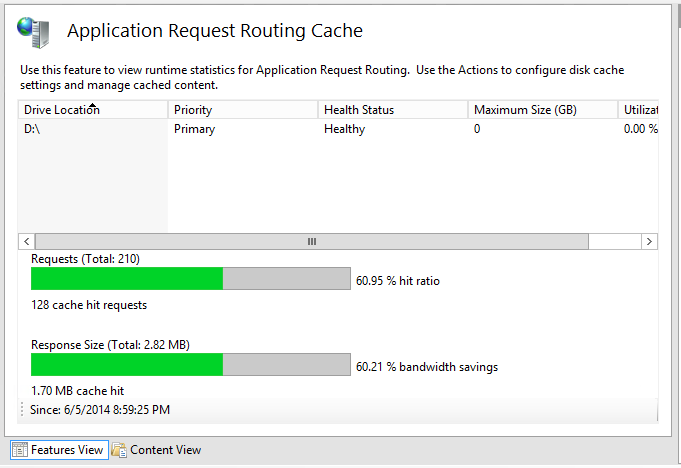

Accelerate

So, that’s an awful lot of effort to get the same content on the same URL. But there is something subtly different: we now “own” the traffic, and we can introduce strategies that improve speed, bandwidth use and latency. Out of the box, the Application Request Routing module we used implements caching that honours the HTTP cache control headers, providing an intermediate shared cache across the users of the service. Simply click on the top-level server name, choose Application Request Routing Cache and add a drive it can use for caching; it will immediately start delivering content from the cache (where it can) instead of going back to the source, providing immediate performance improvements and bandwidth savings.

Now that we “own” the traffic, there are many other smarter ways to deliver the content to users, especially where they are isolated in branch offices and low-bandwidth locations. I’ll be investigating some of those in the next blog, which will turn our internal content delivery network up to 11!
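Whether a shared cache like ARR's may reuse a response is mostly decided by the response's Cache-Control header. The function below is a simplified, hypothetical reading of the standard HTTP caching rules (RFC 7234), not ARR's actual implementation: `private` and `no-store` forbid a shared cache from storing the response, `no-cache` forces revalidation (treated here as "not reusable"), and `s-maxage` takes precedence over `max-age`. It ignores Expires, Vary, heuristic freshness and the rules for authenticated requests.

```python
def shared_cache_ttl(cache_control):
    """Seconds a shared cache may reuse a response, or 0 if it should not.

    Simplified reading of Cache-Control: private/no-store mean "do not
    store", no-cache means "revalidate every time" (treated as 0 here),
    and s-maxage wins over max-age. Expires, Vary and heuristics are ignored.
    """
    directives = {}
    for part in cache_control.split(","):
        name, _, value = part.strip().partition("=")
        if name:
            directives[name.lower()] = value.strip().strip('"')
    if directives.keys() & {"private", "no-store", "no-cache"}:
        return 0
    for key in ("s-maxage", "max-age"):
        if key in directives:
            try:
                return max(0, int(directives[key]))
            except ValueError:
                return 0
    return 0
```

Static CDN assets with a long `max-age` are the ones that benefit most from the shared cache, while personalised SharePoint pages marked `private` will keep going back to the source.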

