I’ve blogged a bit in the past about the unique challenges encountered when moving to the cloud and the unavoidable consequence of introducing new network hops when moving workloads out of the data centre. I’m currently working for a unique organisation in the mining industry that is quite aggressively pursuing cost-saving initiatives and sees cloud as one potential source of savings. The uniqueness of the IT operating environment comes from the dispersed and challenging “branch offices”, which may lie at the end of a long dedicated wired, microwave or satellite link.

Centralising IT services to a data centre in Singapore is all very well if your office is on a well-serviced broadband Internet link, but what of these other data centres with more challenged connectivity? They have until now been well served by the Exchange server, file server or application server in a local rack, and quite rightly want to know: “What is the experience going to be like for my users if this server is at the other end of a long wire across the Pilbara?”

Moving application workloads to best serve these challenging end user scenarios depends heavily on the application architecture. The mining industry, more so than other industries, has a challenging mix of file-based, database and high-end graphical applications that might not tolerate having tiers of the application spanning a challenging network link. To give the equivalent end user experience, do we move all tiers of the application and RDP the user interface, move the application server and deliver HTTP, or move just the database or files and leave the application on site?

Answering these questions is not easy. It depends on hard-to-measure qualitative aspects of the user experience, like scrolling, frame rate, opening times and report responsiveness, which can normally only be determined once the workload has been moved out of the local data centre. What we need is a way to synthesise the application environment as if the additional network challenges had been injected into the application stack, without actually moving anything.

What we need is something like the “Network Link Conditioner” that is available on Mac and UNIX environments but not on Windows. Introducing NEWT, the Network Emulator Toolkit for Windows, a product of the hardworking Microsoft Research team in Asia. And for such a powerful and important tool in demonstrating the effects of moving to cloud, it’s not so easy to find!

Installing

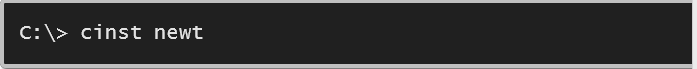

It’s been around for a while and there are a few odd web sites that still host copies of the application, but the most official place I can find is the new and oddly named Chocolatey gallery download site. Once you have Chocolatey installed, it’s just a simple install command:

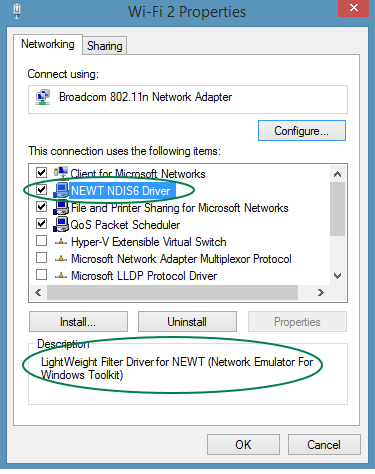

So what can it do? It can help to simulate various network challenges by using a special, configurable network adapter inserted into the network stack. After you install the application on a machine, open “Network and Sharing Centre”, choose “Change adapter settings”, then right-click one of your network adapters and choose “Properties”. You should see this.

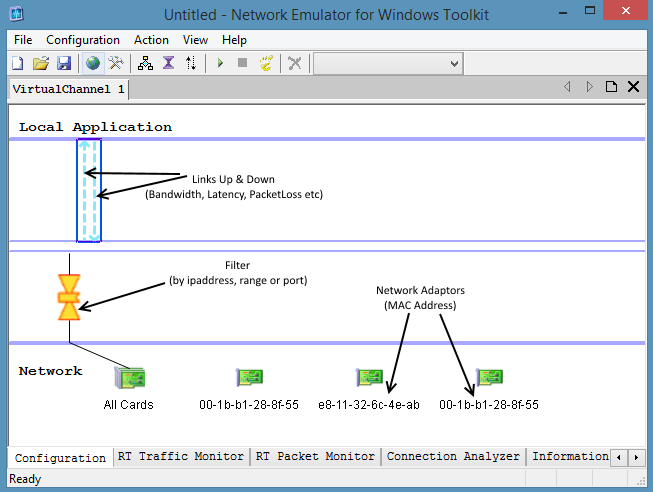

A lap around NEWT

The documentation that comes with NEWT is actually very good, so I won’t cover it again here. There is particularly detailed information on the inner workings of the network pipeline and the effect of configuration options on it. Interestingly, the NEWT user interface is just one of the interfaces: it also supports .NET and COM interfaces for building your own custom solutions.

Running

- Add a Filter to one or all of your network adapters and choose the IP addresses and/or ports you want to affect

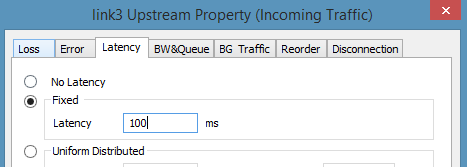

- Add up and/or down Links and set the desired effect on bandwidth restriction, additional latency, packet loss and reordering.

- Try Action->Toggle Trace, Push Play and have a look at the trace windows to see the packets being sniffed

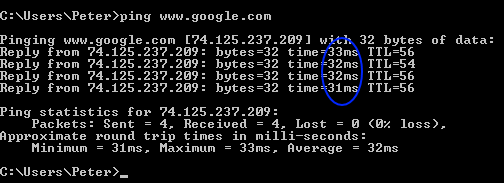

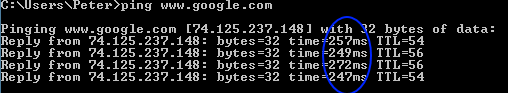

To quickly check that it is working as expected, try this: ping Google and note the latency (32 ms) and IP address (74.125.237.209), set a Filter with 100 ms of uplink and downlink latency, run NEWT and ping again.
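As a rough sketch of what the second ping should show: the echo request crosses the uplink and the reply crosses the downlink, so both injected delays land on the round trip. The function name below is made up for illustration, and it assumes the filter adds a fixed delay in each direction and nothing else, so NEWT's own processing overhead is not modelled.

```python
def expected_rtt_ms(baseline_ms: float, uplink_ms: float, downlink_ms: float) -> float:
    """Estimate the ping round trip once NEWT latency is applied.

    Assumption: the filter adds a fixed delay in each direction and
    nothing else (NEWT's own overhead is ignored).
    """
    return baseline_ms + uplink_ms + downlink_ms


# Figures from the ping test: 32 ms baseline, 100 ms injected each way.
print(expected_rtt_ms(32, 100, 100))  # 232
```

Anything well above that figure on a real ping points to overhead or to a filter that matches more traffic than intended.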

There’s now an additional 200 ms (100 up and 100 down, plus some NEWT filter overhead) added to the round trip to the Google home page. You will notice it if you open a browser window to Google and hit Refresh (so the page is not served from the browser cache). If you want to go back in time, try using the pre-created filters like the “Dialup56K” setting! So enough messing around; what about the serious stuff?

So here are a few scenarios I’ve used it in recently:

Throttling Email

Why: When moving from an in-house hosted Exchange environment to Office 365 email, mailbox synchronisation traffic must pass through the corporate Internet-facing networking infrastructure. Outlook (with the use of Cached Exchange Mode) is actually extremely good at dealing with the additional latency, but some customers may be concerned about the slower email download rates now that Exchange is not on the same network (I don’t know why, since email is inherently an asynchronous messaging system). To demonstrate the effect of slower downloads:

- Download a file using the browser and note the download speed (this is to estimate the bandwidth available to an end user across the corporate Internet facing infrastructure)

- Determine the IP address of the corporate Exchange server and add a NEWT Filter for that IP address

- Add a Downlink and limit the bandwidth to the previously noted download speed available to the browser (optionally also add the latency to the Office 365 data centre)

- Send a large email to your Inbox
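Before running the demonstration, it can help to estimate how long the large email should take at the throttled rate, so you know what to expect. The sketch below is a back-of-the-envelope calculation only: the function name and the sample figures are hypothetical, and it ignores protocol overhead, compression and latency.

```python
def transfer_seconds(size_mb: float, bandwidth_mbps: float) -> float:
    """Time to move size_mb megabytes over a bandwidth_mbps link.

    Assumption: 1 MB = 8 megabits, and protocol overhead, compression
    and latency are ignored, so treat the result as a lower bound.
    """
    if bandwidth_mbps <= 0:
        raise ValueError("bandwidth must be positive")
    return size_mb * 8 / bandwidth_mbps


# Hypothetical figures: a 25 MB email over a 100 Mbps LAN versus a
# 4 Mbps share of the corporate Internet link.
for label, mbps in [("LAN", 100), ("Internet link", 4)]:
    print(f"{label}: {transfer_seconds(25, mbps):.0f} s")
```

Substitute the download speed you noted in the first step for the 4 Mbps figure.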

Migration to SharePoint Online

Why: When you have an existing Intranet served internally to internal users it can be difficult to describe what the effect will be of moving that workload to SharePoint Online. There are certainly differences but browsers are remarkably good at hiding latency and tools like OneDrive become more important. Demonstrating these effects to a customer to ease concerns about moving offshore is easy with NEWT.

- Estimate the latency to the SharePoint Online data centre (Australia to Singapore is around 130 ms each way)

- Add a NEWT Filter for the internal Intranet portal

- Add an Uplink and Downlink and add on your estimated Singapore latency

- Browse and walk through the Intranet site showing where latency has an effect and the tools that have been built to get around it.

And remember, for web applications like this your other favourite tool, Fiddler, still works as expected because it operates at a different layer of the network stack.

Migrating an Application Database to the Cloud

Why: There are many cases where an application is deployed to local machines but communicates with a central database, which may be worth moving to a cloud-based database for better scalability, performance, backup and redundancy. Applications that have been built to depend on databases can vary significantly in their usage of the database. Databases are extremely good at serving needy applications, which has encouraged inefficient development practices and chatty database usage. For this reason, database-bound applications can vary significantly in their ability to tolerate being separated from their database. NEWT can help synthesise that environment to determine whether you’ve got an application that is tolerant or intolerant of a database move to the cloud.

- Determine the connection string used by the application to connect to the database

- Add a NEWT Filter for the IP address of the database server on the database connection port (for example 1433, the SQL Server default)

- Inject as much latency as you need to simulate the distance between this desktop application and the intended location of the database.
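To see why chatty applications suffer most, here is a minimal sketch of the latency cost alone. It assumes every query waits for the previous one to return, so each query costs one full round trip. The function name and the query counts are hypothetical, and the 130 ms each way is simply the Australia to Singapore estimate reused.

```python
def added_wait_seconds(round_trips: int, one_way_ms: float) -> float:
    """Extra wall-clock time from latency alone for sequential queries.

    Assumption: each query waits for the previous one to finish, so it
    costs one full round trip (2 x the one-way latency).
    """
    return round_trips * 2 * one_way_ms / 1000


# Hypothetical screens: one issues a query per row, the other batches.
chatty = added_wait_seconds(200, 130)
batched = added_wait_seconds(5, 130)
print(f"chatty: {chatty:.1f} s, batched: {batched:.1f} s")
```

The same screen that feels instant against a local database can take the better part of a minute once the round trips add up, which is exactly the behaviour the NEWT filter lets you observe for real.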

Migrating an Application to the Cloud

Why: The question often comes up of lifting and shifting an entire application to the cloud along with all dependent tiers and presenting the application back to the user over RDP. Will an RDP interface be effective for this application?

- Set up two machines, one with the application user interface (with Remote Desktop Services enabled) and one with the Remote Desktop client

- Inject the required latency using a NEWT filter on the application machine's IP address and on the RDP port 3389.

- Allow the user to operate the application using the synthesised latent RDP interface
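Before handing the session to a user, it is worth confirming that the filter really is delaying traffic on the RDP port. One way, sketched below, is to time a TCP connection to that port with NEWT stopped and again with it running. Opening a TCP connection takes roughly one round trip, so the difference should be close to the uplink plus downlink latency you injected. The helper names and the address in the usage comment are hypothetical.

```python
import socket
import statistics
import time


def tcp_connect_ms(host: str, port: int, timeout: float = 5.0) -> float:
    """Return the time in milliseconds taken to open a TCP connection."""
    start = time.perf_counter()
    # create_connection returns once the TCP handshake has completed.
    with socket.create_connection((host, port), timeout=timeout):
        pass
    return (time.perf_counter() - start) * 1000.0


def median_connect_ms(host: str, port: int, samples: int = 5) -> float:
    """Median of several connection timings, to smooth out jitter."""
    return statistics.median(tcp_connect_ms(host, port) for _ in range(samples))


# Usage (hypothetical address): run once with NEWT stopped and once
# with the filter running, then compare the two figures.
# print(median_connect_ms("192.0.2.10", 3389))
```

This only measures connection setup, not how the RDP session feels, so it is a check on the test rig rather than a substitute for the user walkthrough.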

There are many other possible uses, including synthesising the effects of packet loss and reordering on video streaming, and NEWT is invaluable for demonstrating the effect of network challenges on Lync or other VoIP deployments. In a corporate IT environment where a cloud migration strategy is being considered, NEWT is an invaluable tool to help demonstrate the effects of moving workloads, to make the right decisions about those workloads, and to determine which interfaces between systems and people have, and have not, been designed and built to tolerate being split across a large and unpredictable network.