PnP Provisioning PowerShell, Site Scripts or CSOM scripts – which one to use and when?

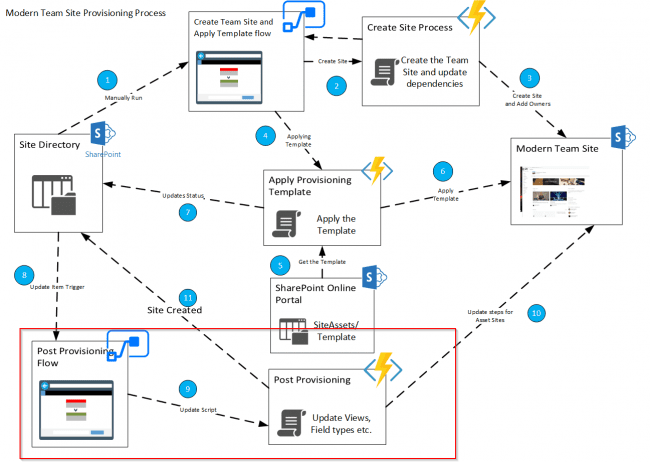

There are several ways to plan and automate the creation and management of SharePoint Online sites. In this blog we will look at these options and at when each one is the best fit.

PnP Provisioning PowerShell is a great way to automate the creation of SharePoint assets from an XML or .pnp template file using PowerShell. Similarly, site scripts and site designs let us create sites from JSON templates, and they can also call provisioning automation scripts or use a template for custom implementations.…