How to make cool modern SharePoint Intranets Part 1 – Strategize (scope & plan)

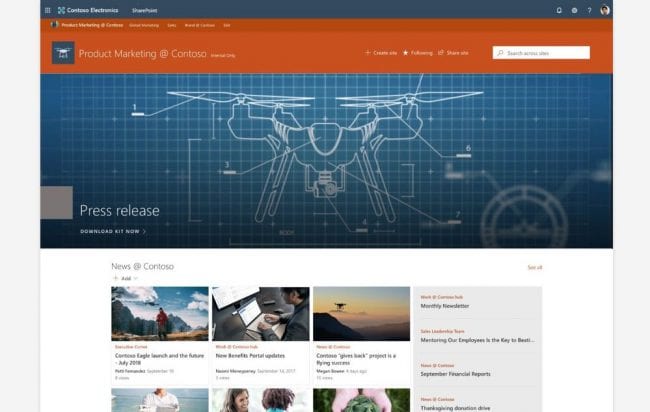

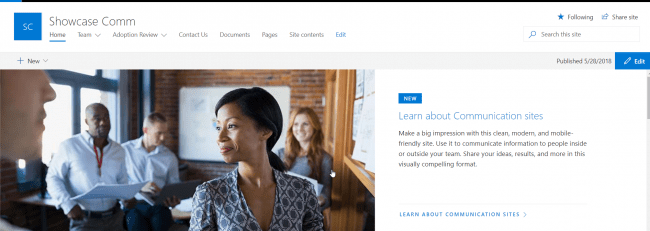

Over the last year, we have seen many great advancements in SharePoint communication sites that have brought them closer to being an ideal Intranet. In this blog series, I will discuss some of my experiences from the last few years implementing modern Intranets, along with best practices for doing so.

In this post, we will look at strategies for the first building block of a great Intranet: strategizing the Intranet approach.…