Set your eyes on the Target!

So in my previous posts I’ve discussed a couple of key points in what I define as the basic principles of Identity and Access Management;

- Requirements

- Source

- The Vault

- And as a side topic the DEA

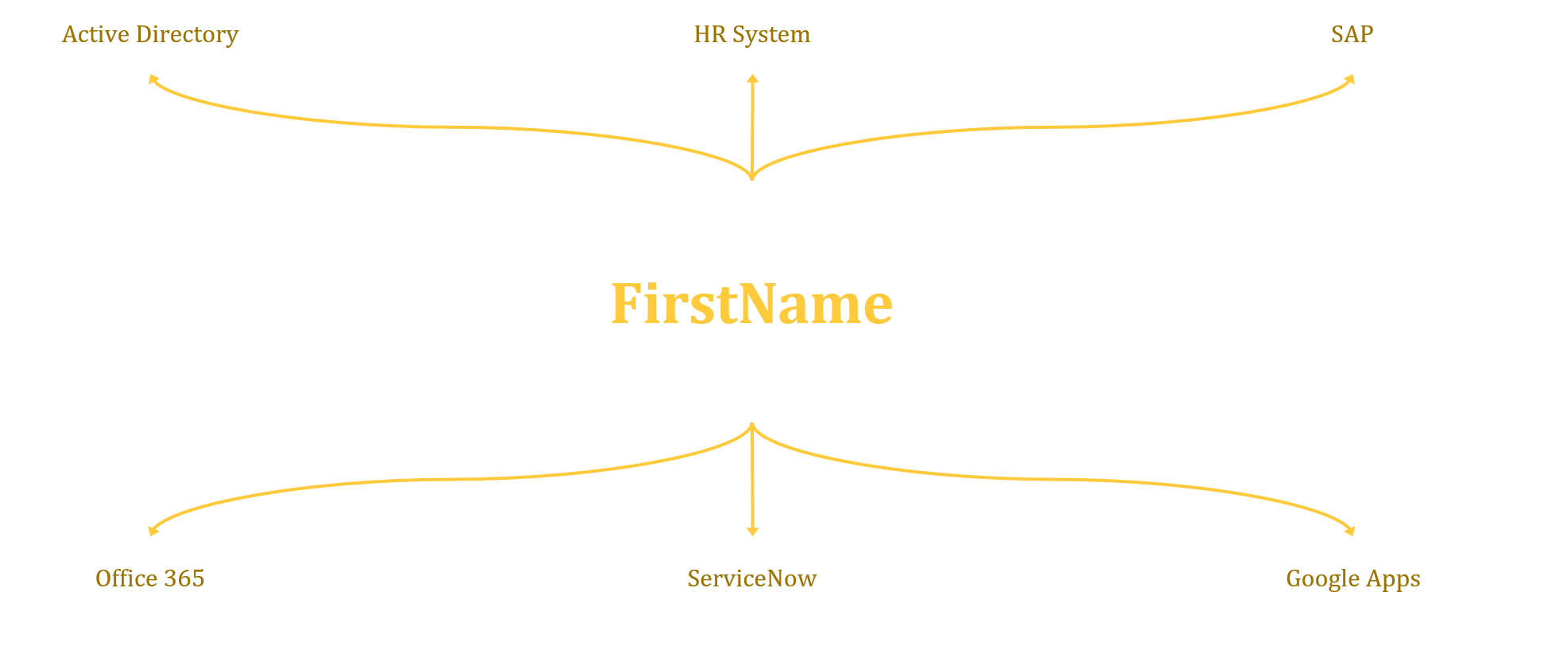

Now that we have all the information needed, we can start to look at your target systems. Now in the simplest terms this could be your local Active Directory (Authentication Domain), but this could be anything, and with the adoption of cloud services, often these target systems are what drives the need for robust IAM services.… [Keep reading] “Set your eyes on the Target!”

In this post I will talk about data (aka the source)! In IAM there’s really one simple concept that is often misunderstood or ignored. The data going out of any IAM solution is only as good as the data going in. This may seem simple enough but if not enough attention is paid to the data source and data quality then the results are going to be unfavourable at best and catastrophic at worst.

In this post I will talk about data (aka the source)! In IAM there’s really one simple concept that is often misunderstood or ignored. The data going out of any IAM solution is only as good as the data going in. This may seem simple enough but if not enough attention is paid to the data source and data quality then the results are going to be unfavourable at best and catastrophic at worst.