In the last post I introduced the idea of breaking the secure transport layer between cloud provider and employee so that those services can be delivered more effectively to employees using company-provided infrastructure.

In short, we deployed a server which re-presents the cloud secure URLs using a new trusted certificate. This enables us to do some interesting things, like providing centralised and shared caching across multiple users. The Application Request Routing (ARR) module is designed for delivering massively scalable content delivery networks to the Internet, but turned on its head it can be used to deliver cloud service content efficiently to internal employees. That’s a great solution where we have cacheable content like images, JavaScript, CSS, etc. But can we do any better?

Yes we can, and it’s all possible because we now own the traffic and the servers delivering it. To test the theory I’ll be using a SharePoint Online home page, which by itself is about 140K; the total page size with all resources uncached is a whopping 1046K.

Compression

Surprisingly, when you look at a Fiddler trace of a SharePoint Online page, the main page content coming from the SharePoint servers is not compressed (the static content, however, is). It is also marked as not cacheable, since it can change on each request. That means a large page download occurs for every page view, which is particularly expensive if (as many organisations do) you have the Intranet home page set as the default page when the browser opens.

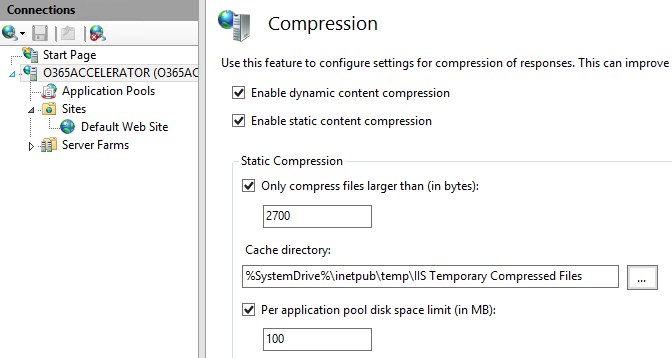

Since we are using Windows Server IIS to host the Application Request Router, we get a free ride on some of the other modules that have been built for IIS, such as compression. There are two types of compression available in IIS: static compression, which can be used to pre-calculate the compressed output of static files, and dynamic compression, which compresses the output of dynamically generated pages on the fly. Dynamic compression is the module we need to compress the home page on the way through our router.

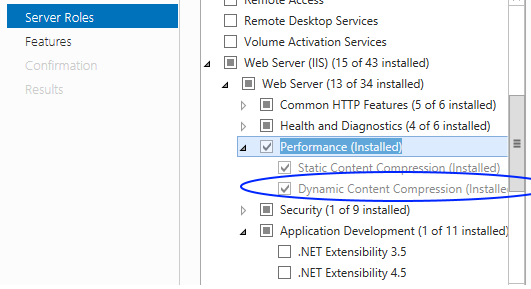

Install the Dynamic Compression component of the Web Server (IIS) role.

Configuring compression is simple. First, make sure Dynamic Compression is enabled at the IIS server level and also at the Default Web Site level.

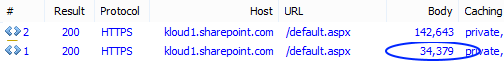

By enabling dynamic compression we are allowing the Cloud Accelerator to step in between server and client and apply gzip encoding to anything that isn’t already compressed. On our example home page the effect is to reduce the download size from a whopping 142K down to 34K.
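To make the idea concrete, here is a minimal Python sketch of what "compress on the way through" means for a proxy. It is not how the IIS module is implemented; the function names `compress_if_needed` and `percent_saved` are made up for illustration, and headers are modelled as a plain dictionary with exact-case keys.

```python
import gzip


def compress_if_needed(headers, body, accept_encoding):
    """Gzip a response body passing through a proxy, unless it is already encoded.

    headers: dict of response headers (exact-case keys, an assumption of this sketch)
    body: response body as bytes
    accept_encoding: value of the client's Accept-Encoding request header
    """
    # Leave the response alone if the origin already encoded it,
    # or if the client did not advertise gzip support.
    if "Content-Encoding" in headers or "gzip" not in accept_encoding.lower():
        return headers, body

    compressed = gzip.compress(body)
    new_headers = dict(headers)
    new_headers["Content-Encoding"] = "gzip"
    new_headers["Content-Length"] = str(len(compressed))
    return new_headers, compressed


def percent_saved(original_size, compressed_size):
    """Share of the original download removed by compression, in percent."""
    return 100.0 * (original_size - compressed_size) / original_size
```

Plugging in the figures from the example page, `percent_saved(142, 34)` comes out at roughly 76, so about three quarters of the home page download disappears for every request.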

We’ve added compression to uncompressed traffic which will help the experience for individuals on the end of low bandwidth links, but is there anything we can do to help the office workers?

BranchCache

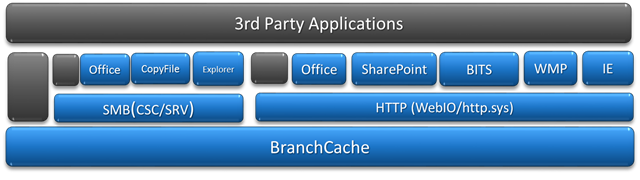

BranchCache is a Windows Server role and Windows service that has been around since Server 2008/Win 7 and, despite being enormously powerful, has largely slipped under the radar. BranchCache is a hosted or peer-to-peer file block sharing technology, much like you might find behind torrent-style file sharing networks. Yup, that’s right: if you wanted to, you could build a huge file sharing network using out-of-the-box Windows technology! But it can be used for good too.

BranchCache operates deep under the covers of Windows operating systems when communicating using one of the BranchCache-enabled protocols: HTTP, SMB (file access), or BITS (Background Intelligent Transfer Service). When a user on a BranchCache-enabled device accesses files on a BranchCache-enabled file server, or accesses web content on a BranchCache-enabled web server, the hooks in the HTTP.SYS and SMB stacks kick in before all the content is transferred from the server.

HTTP BranchCache

So how does it work with HTTP?

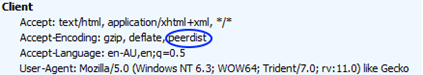

When a request is made from a BranchCache-enabled client, there is an extra header in the request, Accept-Encoding: peerdist, which signifies that this client accepts not only normal HTML responses but also another form of response: content hashes.

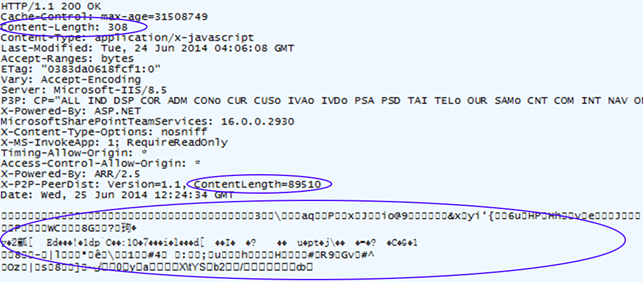

If the server has the BranchCache feature enabled it may respond with Content-Encoding: peerdist along with a set of hashes instead of the actual content. Here’s what a BranchCache response looks like:

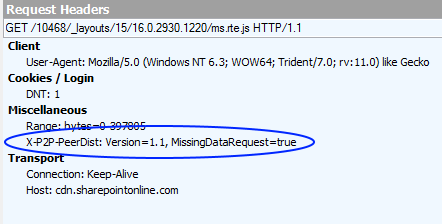

Note that if there were no BranchCache operating at the server, a full response of 89510 bytes of JavaScript would have been returned. Instead, a response of just 308 bytes was returned, which contains only a set of hashes. These hashes point to content that can then be requested from a local BranchCache, or broadcast out on the local subnet to see if any other BranchCache-enabled clients or cache host servers have the actual content which corresponds to those hashes. If the content has been previously requested by one of the other BranchCache-enabled clients in the office then the data is retrieved immediately; otherwise an additional request is made to the server (with MissingDataRequest=true) for the data. Note that this means some users will experience two requests, and therefore a slower response time, until the distributed cache is primed with data.
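The flow above can be sketched in a few lines of Python. This is a deliberately simplified model, not the real peerdist wire format: the 64 KB block size, the use of SHA-256, and the names `block_hashes` and `fetch` are all assumptions for illustration, and a plain dictionary stands in for the office's peers or hosted cache.

```python
import hashlib

BLOCK_SIZE = 64 * 1024  # illustrative block size, not taken from the protocol spec


def split_blocks(content, block_size=BLOCK_SIZE):
    """Cut content into fixed-size blocks (the last one may be shorter)."""
    return [content[i:i + block_size] for i in range(0, len(content), block_size)]


def block_hashes(content, block_size=BLOCK_SIZE):
    """What the server sends instead of the body: one hash per block."""
    return [hashlib.sha256(b).hexdigest() for b in split_blocks(content, block_size)]


def fetch(content_on_server, office_cache, block_size=BLOCK_SIZE):
    """Simulate one client request; return (body, bytes_pulled_from_server).

    office_cache maps hash -> block bytes and is shared by all clients in the office.
    """
    hashes = block_hashes(content_on_server, block_size)        # first response: hashes only
    server_blocks = split_blocks(content_on_server, block_size)
    body, pulled = [], 0
    for digest, server_block in zip(hashes, server_blocks):
        block = office_cache.get(digest)
        if block is None:
            # Cache miss: a second request goes to the server for the missing data.
            block = server_block
            pulled += len(block)
            office_cache[digest] = block
        body.append(block)
    return b"".join(body), pulled
```

In this model the first client in the office pulls every byte from the server and primes the cache, while a second client asking for the same content pulls nothing beyond the hashes, which is the behaviour described for Employee A and Employee B later in the post.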

It’s important at this point to understand the distinction between BranchCache and the normal HTTP caching that operates in the browser. The browser cache will cache whole HTTP objects where possible, as indicated by cache headers returned by the server. BranchCache operates regardless of HTTP cache-control headers and works at the block level, caching parts of files rather than whole files. That means you’ll get caching across multiple versions of files that have changed incrementally.
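A small Python sketch shows why block-level caching survives an incremental change where whole-object caching would not. The tiny 4-byte block size, the SHA-256 hashes and the helper names are assumptions chosen to keep the example readable; they are not BranchCache's actual parameters.

```python
import hashlib


def block_hashes(content, block_size):
    """Hash each fixed-size block of content."""
    return [hashlib.sha256(content[i:i + block_size]).hexdigest()
            for i in range(0, len(content), block_size)]


def blocks_to_download(new_version, cached_hashes, block_size):
    """Count blocks of new_version whose hashes are not already cached."""
    return sum(1 for h in block_hashes(new_version, block_size)
               if h not in cached_hashes)


block_size = 4
v1 = b"AAAABBBBCCCCDDDD"
v2 = b"AAAABBBBXXXXDDDD"   # only the third block changed, in place

cached = set(block_hashes(v1, block_size))
missing = blocks_to_download(v2, cached, block_size)
print(missing, "of", len(block_hashes(v2, block_size)), "blocks need downloading")
```

A browser cache would treat the second version as an entirely new object, whereas here only the changed block is missing. Note that with the fixed-size blocks used in this sketch, inserting bytes near the start of a file shifts every later block boundary and defeats the reuse; how the real implementation picks block boundaries is outside the scope of this example.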

BranchCache Client Configuration

First up, note that BranchCache client functionality is not available on all Windows versions. It is only available in the Enterprise and Ultimate editions of Windows 7/8, which may restrict some potential usage.

There are a number of ways to configure BranchCache on the client, including Group Policy and netsh commands; however, the easiest is to use PowerShell. Launch an elevated PowerShell command window and execute one of the BranchCache cmdlets.

- Enable-BCLocal: Sets up this client as a standalone BranchCache client; that is it will look in its own local cache for content which matches the hashes indicated by the server.

- Enable-BCDistributed: Sets up this client to broadcast out to the local network looking for other potential Distributed BranchCache clients.

- Enable-BCHostedClient: Sets up this client to look at a particular static server nominated to host the BranchCache cache.

While you can use a local cache, the real benefits come from distributed and hosted mode, where the browsing actions of a single employee can benefit the whole office. For instance, if Employee A and Employee B are sitting in the same office and both browse to the same site, then most of the content for Employee B will be retrieved directly from Employee A’s laptop rather than re-downloaded from the server. That’s really powerful, particularly where there are bandwidth constraints in the office and common sites that are used by all employees. But it requires that the web server serving the content participates in the BranchCache protocol by installing the BranchCache feature.

HTTP BranchCache on the Server

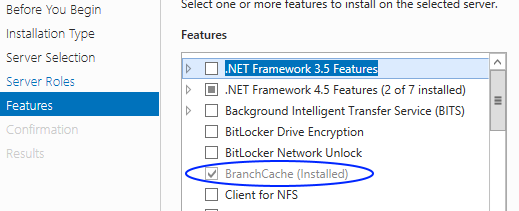

One of the things you lose when moving to the SharePoint Online service from an on-premises server is the ability to install components and features on the server, including BranchCache. However, by routing requests via our Cloud Accelerator, that feature is available again simply by installing the Windows Server BranchCache feature.

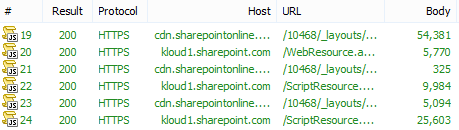

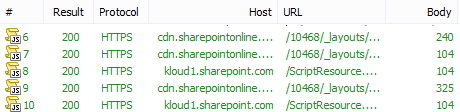

Installing the BranchCache feature on the Cloud Accelerator immediately turns the SharePoint Online service into a BranchCache-enabled service, so the size of the Body items downloaded to the browser goes from this:

To this:

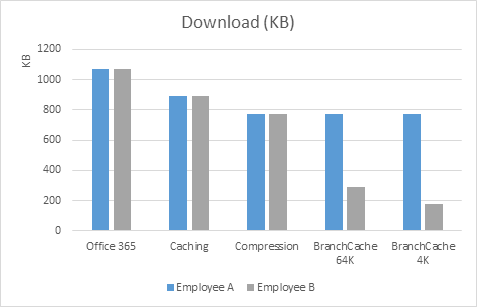

There are some restrictions and some configuration to be aware of. First, you won’t normally see any peerdist hash responses for content with a body size of less than 64KB. You’ll also need a latency of about 70 ms between client and server before BranchCache bothers stepping in. You can actually change these parameters, but it’s not obvious from the public APIs. The settings are stored at this registry key (HKEY_LOCAL_MACHINE\SYSTEM\ControlSet001\Services\PeerDistKM\Parameters) and will be picked up the next time you start the BranchCache service on the server. Changing these parameters can have a big effect on performance, and the right values depend on the exact nature of the bandwidth and latency environment the clients are operating in. In the above example I changed MinContentLength from the default 64K (which would miss most of the content from SharePoint) to 4K. The effect of changing the minimum content size to 4K is quite dramatic on bandwidth, but it will penalise those on a high-latency link because of the multiple requests needed for many small pieces of data not already available in your cache peers.
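As a rough model of those two thresholds, the following Python sketch decides whether a response would be a candidate for a hash response. It is only an approximation of the behaviour described above: `PeerDistThresholds`, `min_latency_ms` and `should_send_hashes` are hypothetical names (only MinContentLength is named in this post), and the 70 ms figure is the approximate value quoted above.

```python
from dataclasses import dataclass


@dataclass
class PeerDistThresholds:
    # Hypothetical container; only MinContentLength is a setting named in the post.
    min_content_length: int = 64 * 1024   # bytes, the default described above
    min_latency_ms: int = 70              # approximate figure from the post


def should_send_hashes(content_length, latency_ms, thresholds):
    """True if a response is big enough and the link slow enough for hashes."""
    return (content_length >= thresholds.min_content_length
            and latency_ms >= thresholds.min_latency_ms)


defaults = PeerDistThresholds()
tuned = PeerDistThresholds(min_content_length=4 * 1024)  # the 4K setting used above

# The 89510-byte script from the earlier trace qualifies under either setting,
# but a 20 KB resource only qualifies once the minimum is lowered to 4K.
print(should_send_hashes(89510, 80, defaults))      # True
print(should_send_hashes(20 * 1024, 80, defaults))  # False
print(should_send_hashes(20 * 1024, 80, tuned))     # True
```

Lowering the minimum brings many more small resources into scope, which is where both the bandwidth saving and the extra round trips on a cold cache come from.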

The following chart shows the effect of our Cloud Accelerator on the SharePoint Online home page for two employees in a single office. Employee A browses to the site first, then Employee B on another BranchCache enabled client browses to the same page.


So while adopting a cloud-based service is often a cost-effective solution for businesses, if the result negatively impacts users and user experience then it’s unlikely to gain acceptance and may actually be avoided in favour of old on-premises habits like local file shares and USB drives. The Cloud Accelerator gives us back ownership of the traffic and the ability to implement powerful features that bring content closer to users. Next post we’ll show how the accelerator can be scaled out ready for production.
