Many years ago, back at uni, I saw two guys in a computer lab writing a whole programming assignment without running it even once. The program was fairly large and written in C, so when they finally compiled it there were hundreds of compilation errors. That’s so silly, I thought…

After graduation I worked as a C++ programmer. The syntax was sometimes quite tricky, and you would often compile after every new line of code. Sometimes you would dare to write a whole function, only to find 10 compilation errors.

Since then the way I code has changed with the help of a modern IDE, Visual Studio, as well as ReSharper – a productivity plugin. I never use just a simple text editor to write a program anymore. Real-time static code analysis checks for compilation errors in the current file while you write. With an abundance of automatic refactorings, a developer can efficiently manipulate a large amount of code. However, there might still be a compilation error in another part of the solution, and you still need to run unit tests, debug, etc.

For quite some time now I’ve been using a tool called NCrunch – a real-time code coverage tool. The idea is simple: it builds the Visual Studio solution and runs all available unit and integration tests automatically in the background while you are still typing. There is no need even to save a file. Initially I decided to give it a go because it was an innovative way to see code coverage quickly. However, it turned out to be much more than that…

As my code has a fair amount of automated unit and integration test coverage, the entire system becomes “alive”. The combination of Visual Studio, ReSharper and NCrunch creates quite an extraordinary experience. Not only do I rarely build my solution manually these days, I also only occasionally need to debug my code.

While typing, you are notified of any compilation error, any broken logic, any component not registered in the DI container, any database error, etc. (assuming there is an automated test covering each of those scenarios).
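To make the “not registered component” case concrete, here is a minimal sketch of the kind of test a continuous test runner could pick up. It is written in Python purely for brevity, and everything in it (`Container`, `build_container`, `OrderService`, `OrderRepository`) is a hypothetical name made up for illustration, not part of NCrunch or of any real DI library. The same idea would apply to an NUnit or xUnit test against a real container in a .NET solution.

```python
import unittest


class Container:
    """Toy DI container (hypothetical, for illustration only)."""

    def __init__(self):
        self._factories = {}

    def register(self, service, factory):
        # Map a service type to a factory that receives the container.
        self._factories[service] = factory

    def resolve(self, service):
        if service not in self._factories:
            raise LookupError(f"{service.__name__} is not registered")
        return self._factories[service](self)


class OrderRepository:
    pass


class OrderService:
    def __init__(self, repository):
        self.repository = repository


def build_container():
    """Composition root: the one place where components are wired up."""
    container = Container()
    container.register(OrderRepository, lambda c: OrderRepository())
    container.register(
        OrderService, lambda c: OrderService(c.resolve(OrderRepository))
    )
    return container


class ContainerRegistrationTests(unittest.TestCase):
    def test_every_root_service_resolves(self):
        # Fails as soon as a registration is removed or forgotten.
        container = build_container()
        for service in (OrderRepository, OrderService):
            with self.subTest(service=service.__name__):
                self.assertIsInstance(container.resolve(service), service)
```

With a test like this in the suite, deleting one of the `register` calls turns the test red on the next background run, without starting the application at all.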

The new experience reminds me of those guys from the uni lab, who never got to run their program before finishing the entire assignment. Not only are technologies and development practices evolving, but the entire coding experience is evolving too. In the past I followed the pattern: code – build – code – build – debug – code… Now my development pattern is: code – code – code – code – deploy. (I still need to debug occasionally.)

There are other interesting projects that promise to further revolutionize the way we interact with computers through programming languages. One such idea is real-time output of a method’s state while it is being written. Here is an amazing video by Bret Victor – Inventing on Principle: http://vimeo.com/36579366. There has been one attempt to achieve the same for C# code: http://ermau.com/making-instant-csharp-viable-visualization/ (source: https://github.com/ermau/Instant). It looks very promising for algorithm development, but unfortunately there are no solid commercial products available yet.

Below are some screenshots of NCrunch. For more details go to www.ncrunch.net:

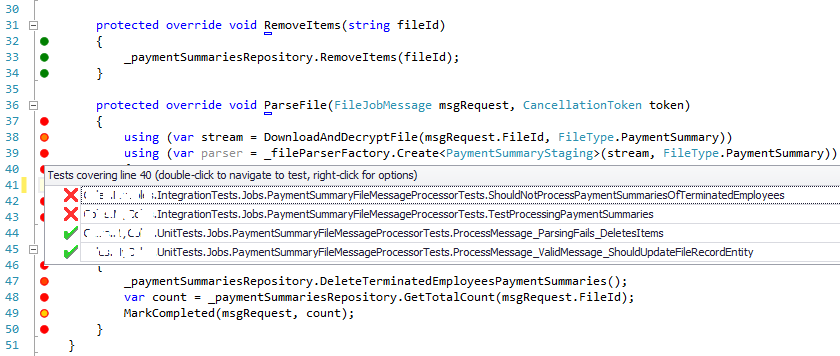

1) By clicking on a dot you can see a list of all tests covering that line, passing and failing.

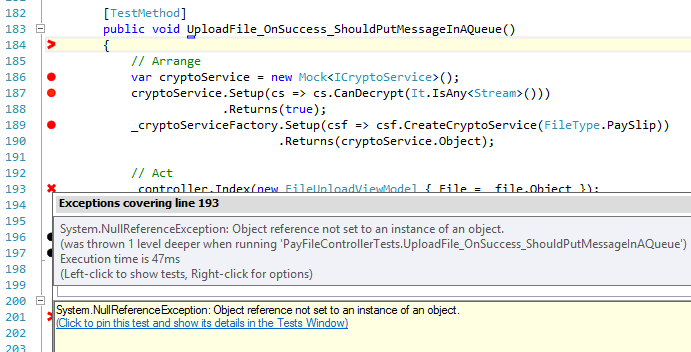

2) By clicking on an “x” you can see where the code failed.

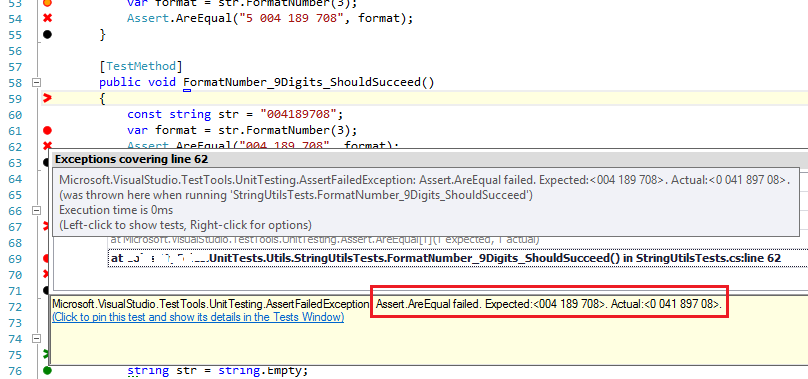

3) The test’s assertion failure is clearly seen below:

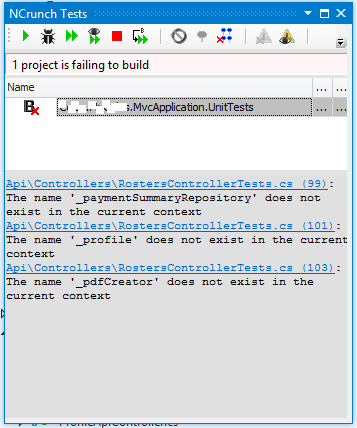

4) Compilation errors are indicated in the following window:

NCrunch is being actively developed and maintained. Give it a try!

I would certainly echo your sentiments here about the value of NCrunch, which I have also been using for a while now. For anyone using a TDD type of approach to coding, or wanting a tool that tends to push you in the right direction, NCrunch is great. It is so nice to simply code away and every now and then pause, wait a few seconds and see the red or green appear.

About the only issue I’ve run into is the ol’ performance problem. Once you have Visual Studio started, with ReSharper and NCrunch running, even a capable machine can become quite sluggish at times. For that reason, I rarely have NCrunch running DI container or database tests, and tend to stick fairly strictly to unit tests. Having said that, I have occasionally run it all with in-memory RavenDB tests going, which was bearable given the pay-off of having the tests running all the time.

Thanks for your feedback!

Out of 500 tests in my current project, a few dozen are integration tests, covering the database, IoC, Azure Storage in the compute emulator and even some calls to SharePoint Online services. However, I do make sure that no integration test runs longer than 1 or 2 seconds on my machine. Hardware is important as well; I’ve got 8 cores, 16 GB of RAM and an SSD. As a result, I don’t feel any performance degradation in Visual Studio running ReSharper.

NCrunch looked neat when I saw a free demoware version of it last year; unfortunately my worries about pricing (TBD then) have come true. $160 is well above what I’m willing to spend out of pocket, and $290 is enough to make the bean counters in corporate IT choke.

When the NCrunch licensing scheme was introduced I was quite disappointed as well. But if you write unit tests you need a code coverage tool. So, what are the alternatives? dotCover (http://www.jetbrains.com/dotcover/buy/index.jsp), for instance, is $100. But NCrunch is not just a fancy code coverage tool; as I described in this post, it’s much more than that. So, for an extra $60 it’s quite a fair deal. Visual Studio also has a built-in code coverage tool, depending on your licence.

In addition, there is the free Mighty-Moose tool: http://continuoustests.com/

But check out the comparison between the two: http://blog.diktator.org/index.php/2012/10/19/continuous-testing-mighty-moose-vs-ncrunch/

As a standalone code coverage tool I’m using OpenCover, ReportGen, and a bit of scripting glue to give a one-click route to a coverage report in my browser. Getting it in my IDE would be nice, but even dotCover’s too expensive to buy out of pocket.

Based on that comparison, MM’s idiosyncrasies are enough that I’d rate it as useless. “You should spend months refactoring all your legacy code so it’s compliant with the current buzzwords and will play nicely with my tool” is laughably divorced from the real world.

As for Visual Studio’s version, since I don’t have that option in my copy I assume it’s a Premium/Ultimate feature. Even if corporate IT were to give me a $5k blank check, I’d put it towards something other than upgrading my MSDN sub; I’ve yet to see anything that looks like even $1k or $2k of value in the upgrade.